A Brief Bibliographic Review on Euler Neural Networks

Paulo Marcelo Tasinaffo*, Gildarcio Sousa Goncalves, Gabriel Adriano de Melo, Luiz Alberto Vieira Dias and Adilson Marques da Cunha

Computer Science Division, Aeronautics Institute of Technology, Brazil

Submission: April 19, 2019; Published: April 29, 2019

*Corresponding author: Paulo Marcelo Tasinaffo, Aeronautics Institute of Technology, Brazil

How to cite this article: Paulo Marcelo Tasinaffo, Gildarcio Sousa Goncalves, Gabriel Adriano de Melo, Luiz Alberto Vieira Dias, Adilson Marques da Cunha. A Brief Bibliographic Review on Euler Neural Networks. Robot Autom Eng J. 2019; 4(3): 555636. DOI: 10.19080/RAEJ.2019.04.555636

Abstract

Euler Neural Networks are used exclusively for modeling non-linear dynamic systems, with applications in control theory. These networks work coupled to the first-order integrator of the Euler type, which was originally proposed by the mathematician Leonhard Euler (1707-1783) in 1768. It is common knowledge that, when this numerical integrator is used with instantaneous derivative functions, its numerical precision is unsatisfactory. On the other hand, when it is coupled with a universal function approximator (e.g., an artificial neural network or a Mamdani-type fuzzy inference system), its accuracy can be improved. The reason is that an artificial neural network, being a universal function approximator, can learn the mean derivative functions of the dynamic system considered instead of its instantaneous derivative functions. This small change makes the precision of the Euler integrator with mean derivatives equivalent to that of a Runge-Kutta integrator of any order. The only drawback of the Euler integrator designed with mean derivatives is that it has a fixed step, whereas the Euler integrator designed with instantaneous derivatives admits a variable step. In the literature, structures that couple a numerical integrator with a universal function approximator are known as universal numerical integrators. This article gives a brief description of Euler Neural Networks together with a literature review, in order to spread their use in the scientific community, since this type of neural model structure is little known.

Keywords: NARMAX methodology; Euler neural networks; Euler-type universal numerical integrators; Mean and instantaneous derivatives methodologies; Runge-Kutta neural networks; Adams-Bashforth neural networks

Introduction

In the second half of the eighteenth century, the mathematicians Euler, Lagrange and Laplace contributed significantly to the development and study of differential equations. At that time these studies already included problems of Variational Calculus, Fluid Mechanics, Power Series and Celestial Mechanics, among others. The first numerical method for solving nonlinear ordinary differential equations, the first-order Euler-type numerical integrator, was proposed by Leonhard Euler in 1768. Around 1900, the German mathematicians Runge and Kutta developed the fourth-order numerical integrators, which solve nonlinear ordinary differential equations much more precisely than the first integrator proposed by Euler [1-3].

However, using only numerical integrators to solve ordinary differential equations limits the scope and applicability of these methods, since they apply only to theoretical models from Physics and Mathematics. A mathematical model suitable for a real-world plant must also take into account input/output pattern data obtained from a data acquisition system. The most adequate theory for dealing with such real-world problems is Least-Squares Theory, which was discovered independently by the mathematicians Gauss (German, in 1795) and Legendre (French, published in 1805) and later refined by Kalman (Hungarian-American, in 1960). Therefore, one way to reconcile the theory of differential equations (predominantly theoretical) with estimation theory, or least squares, is to use universal numerical integrators. Tasinaffo et al. [4] state that universal numerical integrators are divided into three types, which give rise to three distinct methodologies:

(i) NARMAX methodology,

(ii) Instantaneous derivatives methodology, and

(iii) Mean derivatives methodology

Euler Neural Networks are universal numerical integrators of type (iii). Runge-Kutta neural networks and Adams-Bashforth neural networks are universal numerical integrators of type (ii). A very important property is that integrators of type (ii) admit a variable step, while integrators of types (i) and (iii) have a fixed step (see [4]). The reason is that the instantaneous derivative functions do not depend on the integration step, whereas the mean derivative functions do.
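The step dependence can be checked numerically. The following is a minimal sketch, assuming the illustrative system dy/dt = -y (chosen here only because its exact solution is known; it is not taken from the references) and hypothetical function names. It compares the instantaneous derivative at a state with the mean derivative over steps of different sizes.

```python
import math

def instantaneous_derivative(y):
    # Right-hand side f(y) of the illustrative system dy/dt = -y.
    # It depends only on the state, not on any integration step.
    return -y

def mean_derivative(y, h):
    # Secant slope (y(t + h) - y(t)) / h along the exact solution
    # y(t + h) = y * exp(-h); it depends on the step h.
    return (y * math.exp(-h) - y) / h

y = 2.0
steps = [1.0, 0.1, 0.001]
means = [mean_derivative(y, h) for h in steps]
for h, m in zip(steps, means):
    print(f"h = {h:<6} mean = {m:.6f}  instantaneous = {instantaneous_derivative(y):.6f}")
```

In this sketch the mean derivative changes with h and approaches the instantaneous derivative as h shrinks, so a model trained on mean derivatives for one step is tied to that step.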

To follow this article, it helps to know that the design of a Euler Neural Network involves three phases: training, validation and simulation. In the training phase, the neural network learns its target function through supervised training with input/output patterns. In the validation phase, it is tested with patterns that were not used during training, in order to verify how well the learning generalizes. In the simulation phase, the network is put to work on its intended task, that is, propagating the dynamic system with the mean derivative functions it has learned. In the general case of universal numerical integrators, the training phase must be performed with a fixed integration step, whereas the simulation phase can, in some particular cases, be performed with a variable step. This last statement about step variation, however, does not hold for Euler Neural Networks.
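The three phases can be sketched in a few lines. This is an illustration under stated assumptions, not the authors' implementation: the plant is the logistic equation dy/dt = y(1 - y), whose exact flow stands in for measured data; a degree-8 polynomial fitted by least squares stands in for the neural network; and the names `plant_step`, `mean_derivative_model` and `simulate` are hypothetical.

```python
import numpy as np

H = 1.0  # fixed integration step (assumed); shared by training and simulation

def plant_step(y, h=H):
    # Stand-in for measured plant data: exact flow of dy/dt = y * (1 - y) over one step.
    g = np.exp(h)
    return y * g / (1.0 - y + y * g)

def f_instantaneous(y):
    # Instantaneous derivative function, used only for comparison.
    return y * (1.0 - y)

# Training: input/output patterns (y_k, mean derivative over [t_k, t_k + H]).
y_train = np.linspace(0.0, 1.2, 200)
target_train = (plant_step(y_train) - y_train) / H
coeffs = np.polyfit(y_train, target_train, deg=8)  # polynomial stand-in for the network

def mean_derivative_model(y):
    return np.polyval(coeffs, y)

# Validation: patterns that were not used during training.
rng = np.random.default_rng(0)
y_val = rng.uniform(0.0, 1.2, 50)
target_val = (plant_step(y_val) - y_val) / H
val_error = np.max(np.abs(mean_derivative_model(y_val) - target_val))

# Simulation: recursive Euler propagation with the same fixed step H.
def simulate(derivative, y0, n_steps):
    ys = [y0]
    for _ in range(n_steps):
        ys.append(ys[-1] + H * derivative(ys[-1]))
    return np.array(ys)

y0, n_steps = 0.1, 8
exact = [y0]
for _ in range(n_steps):
    exact.append(plant_step(exact[-1]))
exact = np.array(exact)

err_mean = np.max(np.abs(simulate(mean_derivative_model, y0, n_steps) - exact))
err_inst = np.max(np.abs(simulate(f_instantaneous, y0, n_steps) - exact))
print(f"validation error: {val_error:.2e}")
print(f"simulation error with learned mean derivatives: {err_mean:.2e}")
print(f"simulation error with instantaneous derivatives: {err_inst:.2e}")
```

With the same large step, the Euler recursion driven by the fitted mean derivatives tracks the plant far more closely than the one driven by the instantaneous derivatives, which is the behavior the mean derivatives methodology relies on.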

Evolution of Euler Neural Networks

The Russian mathematician A. N. Kolmogorov solved, in 1957, a rather important mathematical problem for estimation theory. He proved that it is possible to represent any continuous function of n variables through a linear combination of bounded, one-dimensional nonlinear functions. Hornik et al. [5] adapted the work of Kolmogorov to the particular case of artificial neural networks with feedforward architecture, giving rise to the universal approximation of functions. Today the existence of this universal approximation property is well established (see [5-7]). The theory that allows dynamic systems to be represented and modeled with artificial neural networks was then developed by many authors (e.g., [7-10]). The first methodology that emerged for this purpose was NARMAX (Non-linear AutoRegressive Moving Average with eXogenous inputs), as proposed in Hunt et al. [7] and Hunt & Sbarbaro [8] in the early 1990s.

In 1998, Wang & Lin [10] published the first scientific paper combining an artificial neural network of RBF (Radial Basis Function) architecture with a fourth-order numerical integration structure of the Runge-Kutta type. They introduced the Runge-Kutta Neural Networks, or the instantaneous derivatives methodology, into the literature. In 2010, Melo & Tasinaffo [11] coupled a neural network of Multilayer Perceptron architecture with a multi-step numerical integration structure of the Adams-Bashforth type, introducing the Adams-Bashforth Neural Networks into the literature. In Tasinaffo & Rios Neto [12], the Adams-Bashforth Neural Networks are coupled to a predictive control structure for orbit transfer control, to solve an aerospace engineering problem. It is important to note that the instantaneous derivatives methodology allows a physical system to be controlled while the integration step is varied in the simulation and control phase. If the NARMAX methodology or the Euler Neural Networks were used for this purpose, the integration step could not be varied during the simulation and control phase.
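The consequence for step variation can be illustrated with a small experiment. In this sketch, which assumes the test system dy/dt = -y, closed-form functions stand in for perfectly trained networks: `f` for a network that learned the instantaneous derivatives and `mean_derivative_trained` for a network that learned the mean derivatives with a training step of 1.0. All names are hypothetical.

```python
import math

def f(y):
    # Stand-in for a network that learned the instantaneous derivatives of dy/dt = -y.
    return -y

def rk4_step(f, y, h):
    # Classical fourth-order Runge-Kutta step around the derivative model f.
    k1 = f(y)
    k2 = f(y + 0.5 * h * k1)
    k3 = f(y + 0.5 * h * k2)
    k4 = f(y + h * k3)
    return y + h * (k1 + 2.0 * k2 + 2.0 * k3 + k4) / 6.0

def mean_derivative_trained(y, h_train=1.0):
    # Stand-in for a network that learned the mean derivatives for step h_train only.
    return y * (math.exp(-h_train) - 1.0) / h_train

def propagate(step_fn, y0, h, t_end):
    y = y0
    for _ in range(round(t_end / h)):
        y = step_fn(y, h)
    return y

y0, t_end = 1.0, 2.0
exact = y0 * math.exp(-t_end)
errors = {}
for h in (1.0, 0.5):
    rk = propagate(lambda y, h: rk4_step(f, y, h), y0, h, t_end)
    eu = propagate(lambda y, h: y + h * mean_derivative_trained(y), y0, h, t_end)
    errors[h] = (abs(rk - exact), abs(eu - exact))
    print(f"h = {h}: RK4 error = {errors[h][0]:.2e}, mean-derivative Euler error = {errors[h][1]:.2e}")
```

At the training step the mean-derivative Euler recursion reproduces the exact solution up to rounding, but it degrades sharply once the simulation step differs from the training step, whereas the Runge-Kutta structure keeps working with either step.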

Euler Neural Networks first appeared in Tasinaffo [13] and were inspired by the first-order Euler integrator, which first appeared in the history of mathematics in Euler [14]. Reference [13] is a doctoral thesis written at the National Institute for Space Research (INPE), in Brazil. Unfortunately, this doctoral thesis was written in Portuguese. The term Euler Neural Network does not appear in this thesis, which uses the term “mean derivatives methodology” instead. The term Euler Neural Networks appears for the first time in Tasinaffo et al. [4]. Thus, wherever references [13] and [15-19] mention the “mean derivatives methodology”, it can be read as a synonym for Euler Neural Networks. Reference [4] is also where the term universal numerical integrator appears for the first time and where it is mathematically defined. The main difference (and one of fundamental importance) between a conventional numerical integrator and a universal numerical integrator is that the former has greater precision the higher its order, whereas the latter must have the same precision regardless of its order (see [11]).

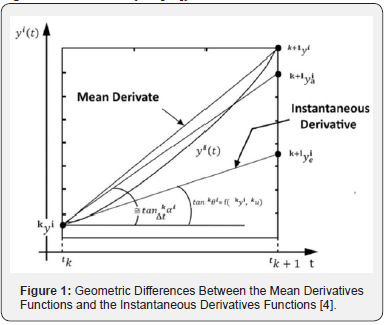

The explanation is relatively simple. A high-order Runge-Kutta universal numerical integrator (see [10]), for example, can learn the instantaneous derivative functions very accurately, and in the simulation phase (after supervised training) its integration step can be varied, since the instantaneous derivative functions are independent of the integration step. A Euler-type universal numerical integrator, however, must learn the mean derivative functions, and no longer the instantaneous derivative functions, in order to cancel the integrator error (see Figure 1). As can be seen in Figure 1, if the Euler-type universal numerical integrator learns the instantaneous derivative functions, it does not cancel this error and ceases to be a universal approximator. Thus, the Euler-type universal numerical integrator cannot vary the integration step in the simulation phase, because the mean derivative functions depend on the length of the interval [t_k, t_{k+1}] (see Figure 1 again). An intermediate-order universal numerical integrator, for example a second-order Adams-Bashforth integrator, has to learn something intermediate between the mean derivative functions and the instantaneous derivative functions to nullify its error (see [11]). Therefore, these intermediate-order universal integrators are likely to have problems when the integration step is varied in the simulation phase, but they will still be highly accurate [4].
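The argument can be written compactly. The following is a sketch in assumed notation (the symbols \Delta t and \bar{f}_{\Delta t} are introduced here for illustration and are not taken from the cited references), for a scalar autonomous system \dot{y} = f(y):

```latex
% Assumed notation: \Delta t = t_{k+1} - t_k, and \bar{f}_{\Delta t} is the mean derivative function.
\begin{align}
\bar{f}_{\Delta t}\bigl(y(t_k)\bigr)
  &= \frac{y(t_{k+1}) - y(t_k)}{\Delta t}
   = \frac{1}{\Delta t}\int_{t_k}^{t_{k+1}} f\bigl(y(t)\bigr)\,dt
   = f\bigl(y(t^{*})\bigr), \quad t^{*} \in [t_k, t_{k+1}], \\
y(t_{k+1}) &= y(t_k) + \Delta t\,\bar{f}_{\Delta t}\bigl(y(t_k)\bigr)
  && \text{(exact, by construction)}, \\
y(t_{k+1}) &= y(t_k) + \Delta t\, f\bigl(y(t_k)\bigr) + O(\Delta t^{2})
  && \text{(instantaneous derivative)}.
\end{align}
```

The first line is the secant slope over the interval, equal to the instantaneous derivative at some intermediate instant by the mean value theorem. Because \bar{f}_{\Delta t} carries \Delta t in its definition, a network trained to approximate it is valid only for the step used in training.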

A brief description of the Euler Neural Networks, with application problems in Aerospace Engineering, can be found in Tasinaffo & Rios Neto [15]. In Tasinaffo & Rios Neto [16], the Euler Neural Networks are applied in a predictive control structure, demonstrating their efficiency and applicability in Control Theory. There is still no application of Euler Neural Networks in adaptive control structures or in IMC (Internal Model Control) structures. Tasinaffo et al. [17] is perhaps the main reference in the literature on the Euler Neural Networks. That paper demonstrates mathematically that the Euler integrator designed with mean derivative functions is an exact and discrete solution for autonomous non-linear dynamic systems. A summary of this mathematical proof can also be found in Tasinaffo & Rios Neto [15]. In Figueiredo et al. [18], the Euler-type universal numerical integrator is designed using a Mamdani-type fuzzy inference system, demonstrating its generalization capacity. In Tasinaffo et al. [19], a continuous approach is proposed for Euler universal integrators designed with mean derivatives. However, this approximation is valid only for non-linear dynamics without control variables.

The first work on universal numerical integrators can be credited to Wang & Lin [10]. There is also a very interesting work by Lagaris et al. [20] on solving ordinary and partial differential equations with artificial neural networks. Before 1997, however, there is nothing about universal numerical integrators: many papers deal only with high-order numerical integrators and many others deal only with universal approximation of functions, but articles combining these two mathematical concepts do not exist at all. The application of these universal integrators in Control Theory can be wide and very promising, although there are only three articles on the subject (see [12,16,21]). The first theoretical discussion of the possibility of using universal numerical integrators in control is presented in Rios Neto [21]. Tasinaffo & Rios Neto [12,16] already present practical experiments. Comparing references [12] and [16], it is easy to see that the control policy estimated for particular Aerospace Engineering problems is noisier in the mean derivatives methodology than in the instantaneous derivatives methodology. The authors of this article do not yet have a plausible explanation for this. There is also a very recent and interesting article (see [22]) that mentions the use of Euler and Runge-Kutta universal integrators coupled with neural networks for the adequate treatment of nonlinear dynamic systems. Finally, for an in-depth mathematical understanding of Euler Neural Networks, it is necessary to read at least the following references, preferably in this order, that is, from the easiest to the most difficult: [4,15-19]. To conclude this section, it should be mentioned that articles involving universal numerical integrators of types (ii) and (iii) are very rare.

Conclusion

The main advantages of using a numerical integrator with a universal approximation of functions are:

a) To allow variation of the integration step in the neural simulation phase and

b) To facilitate the training of the neural network used.

However, only higher-order integrators satisfy item (a). Therefore, Euler-type universal numerical integrators do not allow step variation but are easier to train than the NARMAX methodology. In addition, some interesting benefits and/or properties of the Euler-type universal integrator and its closest competitor (the NARMAX methodology) are:

a. When the integration step tends to zero, the mean derivatives functions tend to the instantaneous derivatives functions.

b. The mean derivative is (see Figure 1), by definition, the slope of the secant to the curve y_i(t) over the interval [t_k, t_{k+1}] and, at the same time, the discrete and exact general solution for the dynamic system dy/dt = f(y, u).

c. The general solution given by the mean derivative functions in the Euler integrator, for the system of nonlinear ordinary differential equations, is discrete and exact. However, the empirical determination of the mean derivatives functions through any universal approximation of functions is approximate, but always possible within a desired error.

d. The Euler-type universal numerical integrator designed with mean derivatives functions has many applications in real world problems, for example, it can be used to predict river flows in river basins or to predict the formation of sunspots from data obtained from observations of the past.

e. The Euler-type universal numerical integrator with mean derivatives functions can be designed within an error with acceptable accuracy through supervised training with input/output patterns using some kind of universal approximation of functions such as artificial neural networks, fuzzy inference systems, paraconsistent inference systems, among many others.

f. The major disadvantage of universal numerical integrators is that the resulting mathematics is more complicated because it involves two distinct mathematical concepts, namely, Numerical Integration Theory and the Theory of Universal Approximation of Functions.

g. The mathematical theory of Euler-type universal numerical integrators is quite simple, since this integrator is of the first order.

h. There is a very significant difference between the instantaneous derivatives and the mean derivative functions. The instantaneous derivative functions are a purely theoretical and mathematical concept, while the mean derivative functions are a purely physical, empirical, and experimental concept. The mean derivative functions can be determined directly from a data acquisition system performed by a computer connected to a real-world plant, whereas the instantaneous derivative functions can only be determined indirectly.

i. The authors of this paper have not yet come to a consensus on whether the mean derivative methodology is an analytical method or a numerical method. It is analytical because it is exact, but it is also numerical because it is discrete. This is the only discrete and exact method we know of. However, determining the mean derivative functions exactly in practice is impossible; with the help of a universal approximation of functions, the error can always be kept below a desired maximum, but it is never exactly zero. Since a computer is needed for this calculation, the methodology can be considered predominantly numerical.

j. The integration step of Euler neural networks need not be infinitesimal or even very small. The step can be of any size, even large. However, the longer the integration step, the more difficult and time-consuming the neural training will be.

k. The only methodology for modeling dynamic systems through artificial neural networks that is widely diffused in the literature is the NARMAX methodology. There are several scientific articles on it, which use this methodology widely in predictive control, adaptive control and IMC structures.

However, using universal numerical integrators with conventional neural networks such as MLP and RBF networks is not very encouraging, because the mean square error decays slowly during training, which requires a lot of processing time, and training may stop at undesirable local minima. On the other hand, using them with emerging neural network technologies such as Deep Learning Neural Networks may offer a promising future, especially in Neurocontrol.

Acknowledgment

First, we would like to thank the Managing Editor Grace Nicholas of the Robotics & Automation Engineering Journal, who invited us to publish this article with them. We would also like to thank the Casimiro Montenegro Filho Foundation (FCMF) and Ecossistema Negocios Digitais Ltda for their financial support of this research. Finally, we would like to thank God for giving us the intelligence to conduct this research.

References

1. Henrici P (1964) Elements of numerical analysis. John Wiley & Sons, New York, USA, pp. 329.

2. Lapidus L, Seinfeld JH (1971) Numerical solution of ordinary differential equations. Academic Press, New York, USA.

3. Lambert JD (1973) Computational methods in ordinary differential equations. John Wiley & Sons, New York, USA.

4. Tasinaffo PM, Gonçalves GS, da Cunha AM, Dias LAV (2019) An introduction to universal numerical integrators. International Journal of Innovative Computing, Information & Control 15(1): 383-406.

5. Hornik K, Stinchcombe M, White H (1989) Multilayer feedforward networks are universal approximators. Neural Networks 2(5): 359-366.

6. Cotter NE (1990) The Stone-Weierstrass theorem and its application to neural networks. IEEE Transactions on Neural Networks 1(4): 290-295.

7. Hunt KJ, Sbarbaro D, Zbikowski R, Gawthrop PJ (1992) Neural networks for control systems - A survey. Automatica 28(6): 1083-1122.

8. Hunt KJ, Sbarbaro D (1991) Neural networks for nonlinear internal model control. IEE Proceedings-D 138(5): 431-438.

9. Chen S, Billings SA (1992) Neural networks for nonlinear dynamic system modelling and identification. Int J Control 56(2): 319-346.

10. Wang Y-J, Lin C-T (1998) Runge-Kutta neural network for identification of dynamical systems in high accuracy. IEEE Transactions on Neural Networks 9(2): 294-307.

11. Melo RP, Tasinaffo PM (2010) Uma metodologia de modelagem empírica utilizando o integrador neural de múltiplos passos do tipo Adams-Bashforth. Sociedade Brasileira de Automática (SBA) 21(5): 487-509.

12. Tasinaffo PM, Rios Neto A (2019) Adams-Bashforth neural networks applied in a predictive control structure with only one horizon. International Journal of Innovative Computing, Information & Control 15(2): 445-464.

13. Tasinaffo PM (2003) Estruturas de integração neural feedforward testadas em problemas de controle preditivo. Instituto Nacional de Pesquisas Espaciais (INPE), São José dos Campos/SP, Brazil, pp. 1-230.

14. Euler L (1768) Institutiones calculi integralis, St. Petersburg.

15. Tasinaffo PM, Rios Neto A (2005) Mean derivatives based neural Euler integrator for nonlinear dynamic systems modeling. Learning and Nonlinear Models 3(2): 98-109.

16. Tasinaffo PM, Rios Neto A (2006) Predictive control with mean derivative based neural Euler integrator dynamic model. Sociedade Brasileira de Automática (SBA) 18(1): 94-105.

17. Tasinaffo PM, Guimarães RS, Dias LAV, Júnior VS, Cardoso FRM (2016) Discrete and exact general solution for nonlinear autonomous ordinary differential equations. International Journal of Innovative Computing, Information & Control 12(5): 1703-1719.

18. Figueiredo OM, Tasinaffo PM, Dias LAV (2016) Modeling autonomous nonlinear dynamic systems using mean derivatives, fuzzy logic and genetic algorithms. International Journal of Innovative Computing, Information & Control 12(5): 1721-1743.

19. Tasinaffo PM, Guimarães RS, Dias LAV, Júnior VS (2016) Mean derivatives methodology by using Euler integrator improved to allow the variation in the size of integration step. International Journal of Innovative Computing, Information & Control 12(6): 1881-1891.

20. Lagaris IE, Likas A, Fotiadis DI (1997) Artificial neural networks for solving ordinary and partial differential equations. Internal report, University of Ioannina, Greece.

21. Rios Neto A (2001) Dynamic systems numerical integrators in neural control schemes. V Congresso Brasileiro de Redes Neurais, Rio de Janeiro-RJ, Brazil, pp. 85-88.

22. Chen RTQ, Rubanova Y, Bettencourt J, Duvenaud D (2018) Neural Ordinary Differential Equations. 32nd Conference on Neural Information Processing Systems (NeurIPS), Montréal, Canada, pp. 1-19.