Framework for Human-Like Reasoning for Agents Operating in the Internet of Things

Michael E Farmer*

University of Michigan Flint, USA

Submission: June 28, 2019; Published: July 10, 2019

*Corresponding author: Michael E Farmer, University of Michigan Flint, Flint, USA

How to cite this article: Michael E Farmer. Framework for Human-Like Reasoning for Agents Operating in the Internet of Things. Robot Autom Eng J. 2019; 4(4): 555642. DOI: 10.19080/RAEJ.2019.04.555641

Keywords: Dempster-Shafer; Evidential reasoning; Kalman filter

Abbreviations: D-S: Dempster-Shafer; IoT: Internet of Things

Introduction

Integrating evidence from a single sensor over time is becoming more common due to the Internet of Things (IoT). In a typical embedded system, a sensor measures a particular set of values over time, and the raw sensor data is often integrated using the Kalman filter. Some sensors, however, output classifications and probabilities rather than raw values, and so generate sequences of beliefs. Integration of beliefs has tended to rely on approaches such as Bayes and Dempster-Shafer (D-S). While these methods are well founded for sensor fusion across multiple sensors, their adaptation to integrating beliefs from a single source over time is not. Traditional probabilistic approaches raise several issues in this setting, including:

i) They are order independent

ii) They are not designed for dynamic environments

iii) They are not well-suited for assignable errors in the sensor measurements.

All of these issues may cause significant evidential conflict and errors. We have been developing an alternative approach for sequential evidence accumulation that integrates the set-theoretic nature of Dempster-Shafer theory with Kalman-based estimation. This approach is motivated by traditional signal processing and human psychology. These models have been shown to be effective, and this paper shows how to implement forgetting, which can be useful in dynamic settings when sensor drop-outs occur.

Human-Like Reasoning for Temporal Fusion

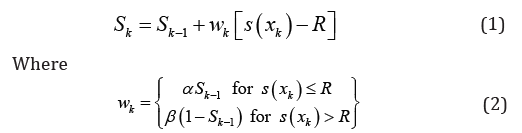

Powell, Roberts and Stampouli observe in [1]: “Existing belief function fusion methods tend to not take advantage of the temporal information and look at the situation at a single time step.” Farmer addressed the benefits of adopting a sensor fusion methodology based on human cognition in [2], with the model of Hogarth and Einhorn [3] being the definitive model. Their model is defined as [3]:

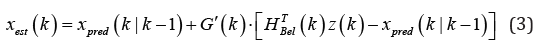

where $S_k$ is the current level of belief, $S_{k-1}$ is the belief at the last update, $S(x_k)$ is the new evidence input into the system, and α and β are weights [4]. The belief-based Kalman estimator is defined by [2]:

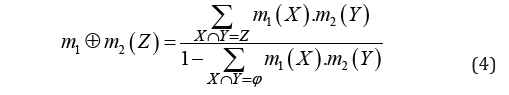

Notice that the structure of this equation parallels that of Hogarth and Einhorn in Equation (1), where the weights $w_k$ correspond to the Kalman gain, G(k). Notice also how dramatically this equation structure differs from traditional conditioning frameworks, such as that provided by Bayes with $p_2(A) = p_1(A|E)$, where E is the new evidence, or that provided by D-S [5]:

where X, Y and Z are the elements of the power set ()PΘ of all the hypothesis options.

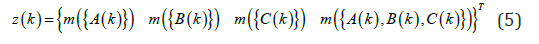
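The contrast between the two update structures can be illustrated with a minimal sketch. It assumes the D-S equation referred to above is Dempster's rule of combination in its standard textbook form, and it uses the evaluation form of the belief-adjustment model as commonly stated for [3], with reference point R = 0. The function names, the default weights and the example values are hypothetical and are not taken from [2] or [3].

```python
from itertools import product


def dempster_combine(m1, m2):
    """Combine two mass functions with Dempster's rule (textbook form).

    Each mass function maps a frozenset (a subset of the frame) to its mass.
    """
    combined = {}
    conflict = 0.0
    for (x, mass_x), (y, mass_y) in product(m1.items(), m2.items()):
        z = x & y
        if z:
            combined[z] = combined.get(z, 0.0) + mass_x * mass_y
        else:
            # Mass assigned to the empty intersection is evidential conflict.
            conflict += mass_x * mass_y
    if conflict >= 1.0:
        raise ValueError("total conflict: the sources cannot be combined")
    return {z: mass / (1.0 - conflict) for z, mass in combined.items()}


def belief_adjustment(s_prev, evidence, alpha=0.5, beta=0.5, ref=0.0):
    """One step of the belief-adjustment model (assumed evaluation form).

    Negative evidence is weighted by alpha * s_prev, positive evidence by
    beta * (1 - s_prev), so the adjustment depends on the current belief.
    """
    if evidence <= ref:
        weight = alpha * s_prev
    else:
        weight = beta * (1.0 - s_prev)
    return s_prev + weight * (evidence - ref)


def accumulate(evidence_sequence, s0=0.5):
    """Apply the belief-adjustment step to a sequence of evidence values."""
    belief = s0
    for evidence in evidence_sequence:
        belief = belief_adjustment(belief, evidence)
    return belief
```

With these illustrative settings, `accumulate([0.8, -0.8])` ends near 0.42 while `accumulate([-0.8, 0.8])` ends near 0.58, so the most recent evidence dominates. Dempster's rule, by contrast, returns the same masses whichever source is supplied first, which is the order independence noted in the Introduction.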

In this new Kalman belief formalism, the state vector is the complete set of D-S beliefs. The measurement vector is the incoming set of probability masses, augmented with the mass of complete ignorance to prevent the system from converging too quickly in its beliefs [6]. Thus, the measurement vector for a problem with three atomic beliefs is:

The details for the calculation of the gain matrix, and the form of the measurement matrix are in [2].

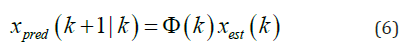
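One way such a measurement vector might be assembled is sketched below. It assumes the ignorance mass is introduced by classical discounting, in which each incoming probability is scaled by (1 - ε) and the remainder ε is assigned to the full frame. Whether [2] uses this exact construction is not stated here, and the function name and the default value of ε are hypothetical.

```python
def measurement_vector(probabilities, ignorance=0.1):
    """Discount classifier probabilities and append the mass of ignorance.

    probabilities: masses on the atomic hypotheses, summing to one.
    ignorance: assumed mass reserved for the full frame (complete ignorance).
    """
    if not 0.0 <= ignorance <= 1.0:
        raise ValueError("ignorance must lie in [0, 1]")
    if abs(sum(probabilities) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to one")
    discounted = [(1.0 - ignorance) * p for p in probabilities]
    # The last element is the mass on the full frame of discernment.
    return discounted + [ignorance]
```

For a three-hypothesis problem this yields a four-element vector whose entries still sum to one, so no single measurement can commit the system fully to one hypothesis.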

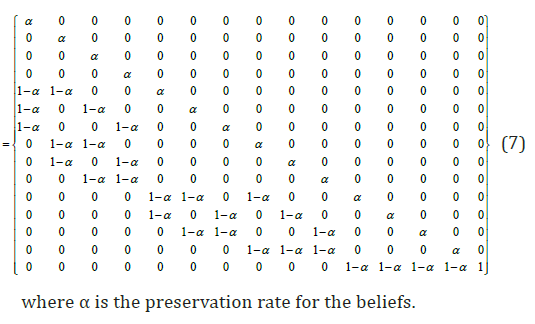

where $x_{est}(k)$ is the estimated state vector for the system at time k, and Φ(k) is the state transition matrix describing the dynamics of the system.

The state transition matrix used here differs from that of conventional dynamic systems, where it captures the underlying Newtonian dynamics. In the dynamic belief environments often encountered by sensors in an IoT setting, there is potentially a need for the system to forget its past beliefs. Extended periods with no incoming evidence may require that the system assume a less committed set of beliefs, i.e., the system will forget its belief set over time.

We propose a state transition matrix to support forgetting. This is accomplished by flowing beliefs to higher cardinality belief sets that are more general hypotheses, and hence have forgotten details. It takes the following form:

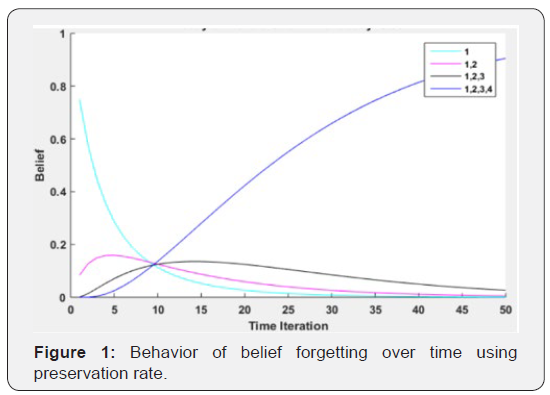

Forgetting is not a processing function normally considered in developing artificially intelligent systems. However, Ricker et al. demonstrated that short-term human visual memory recall rates decline over time [6]. Likewise, many long-term human memory recall models also demonstrate recall decay [6]. This need for forgetting can arise due to changes in the environment or due to events such as sensor dropouts, network loss, obscuration of the target in the environment, etc.

Figure 1 shows how an initial set of evidence flows into higher cardinality sets and ultimately into the set of complete ignorance. The rate at which beliefs decay can be tailored to the specific application through the decay rate. As the decay rate approaches zero, the system defaults to non-forgetting behavior.
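The behavior described above can be reproduced with a simple hypothetical transition matrix. In this sketch, each belief set keeps a fraction (1 - decay) of its mass at every step and passes the rest, split equally, to the sets one cardinality higher that contain it, while the full frame keeps all of its mass. This construction is an assumption made for illustration and is not necessarily the matrix proposed in this paper. The names `forgetting_matrix`, `predict` and `decay` are likewise hypothetical.

```python
from itertools import combinations


def nonempty_subsets(frame):
    """List all non-empty subsets of the frame, ordered by cardinality."""
    atoms = sorted(frame)
    return [frozenset(c)
            for size in range(1, len(atoms) + 1)
            for c in combinations(atoms, size)]


def forgetting_matrix(subsets, decay):
    """Build a column-stochastic matrix that leaks mass to larger sets.

    phi[j][i] is the fraction of the mass on subsets[i] that moves to
    subsets[j] in one prediction step.
    """
    n = len(subsets)
    phi = [[0.0] * n for _ in range(n)]
    for i, source in enumerate(subsets):
        parents = [j for j, target in enumerate(subsets)
                   if source < target and len(target) == len(source) + 1]
        if not parents:
            # The full frame (complete ignorance) has nowhere to leak to.
            phi[i][i] = 1.0
            continue
        phi[i][i] = 1.0 - decay
        for j in parents:
            phi[j][i] = decay / len(parents)
    return phi


def predict(phi, state):
    """Advance the belief state one step: x(k+1) = phi * x(k)."""
    return [sum(row[i] * state[i] for i in range(len(state))) for row in phi]
```

Because every column sums to one, total belief mass is conserved at each step. Setting `decay` to zero gives the identity matrix, so beliefs are held indefinitely. Any positive value moves mass step by step toward the set of complete ignorance when no new evidence arrives.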

Conclusion

This paper provides a model for allowing artificially intelligent systems to forget, or relax, their beliefs. Beliefs are advanced based on a Kalman filter formalism, and the state transition matrix is shown to be an effective mechanism for supporting forgetting. Forgetting plays a critical role in human cognition. Sensors embedded in distributed networks in dynamic environments can benefit from belief relaxation or forgetting.

Conflicts of Interest

There are no conflicts of interest.

References

- Powell G, Roberts M, Stampouli D (2012) Improvements to GRP1 Combination Rule. Belief Functions: Theory and Applications 164: 293-300.

- Farmer ME (2017) Sequential evidence accumulation via integrating Dempster-Shafer reasoning and estimation theory. Intelligent Systems Conference. pp. 90-97.

- Hogarth RM, Einhorn HJ (1992) Order effects in belief updating: The belief-adjustment model. Cognitive Psychology 24(1): 1-55.

- Cossart C, Tessier C (1999) Filtering versus revision and update: Let us debate!. European Conference on Symbolic and Quantitative Approaches to Reasoning with Uncertainty. pp. 116-127.

- Shafer G (1976) A Mathematical Theory of Evidence. Princeton University Press.

- Ricker TJ, Spiegel LR, Cowan N (2014) Time-based loss in visual short-term memory is from trace decay, not temporal distinctiveness. Journal of Experimental Psychology: Learning, Memory and Cognition 40(6): 1510-1523.

- Gelb A (1974) Applied Optimal Estimation. Cambridge: MIT Press.