Study of Perceptual Effects of Auditory Ecology on Phoneme Perception among Individuals with Mild to Moderate Sensorineural Hearing Loss

Avinash Krishnamurthy*, Suresh Thontadharya, Prajna Bhat and Sreelekha Dudekula

Samvaad Institute of Speech and Hearing, Hebbal, India

Submission: February 21, 2017; Published: March 09, 2017

*Corresponding author: Avinash Krishnamurthy, Samvaad Institute of Speech and Hearing, Hebbal, Bengaluru, India, Tel: 9620224326; Email: avi.krishnamurthy@gmail.com

How to cite this article: Avinash K, Suresh T, Prajna B, Sreelekha D. Study of Perceptual Effects of Auditory Ecology on Phoneme Perception among Individuals with Mild to Moderate Sensorineural Hearing Loss. Glob J Oto 2017; 4(5): 555649. DOI: 10.19080/GJO.2017.04.555649.

Abstract

Background: Speech signals are known to be significantly altered by background noise, reverberation, and less-than-ideal frequency and temporal responses of the communication channel. The everyday noises in an individual’s own acoustic environment form a heterogeneous and dynamic group: they change throughout the day as well as over days and months. The impact of these everyday noises on speech perception in individuals with normal hearing and with hearing impairment is therefore not easy to predict from clinical measures of word recognition scores. The effect of noise on word recognition score (WRS) is routinely measured using the Speech in Noise (SPIN) test, in which the competing signal is audiometric noise. Hence, the present study aims to assess the speech perception of individuals with hearing loss using recorded noises that are representative of the typical noises these individuals encounter in their daily routine.

Material and Method: The participants were 15 adults with bilateral mild to moderate hearing loss in the age range of 30-50 years. A detailed audiological evaluation was carried out on all participants prior to the study to ensure compliance with the selection criteria. Real world noises were recorded at locations frequented by the subjects in their daily life. These locations were identified in a prior study (Sreelekha, 2014) using a survey and questionnaire tool, in which all daily acoustic environments were tabulated by how frequently they were encountered. The reported real world noises were recorded using a Sound Level Meter (SLM) with a condenser microphone connected to a laptop computer running audio recording software. Speech perception was measured using a standard phoneme perception test in Kannada (devaki..), with these noises mixed into the test materials at predetermined signal-to-noise ratios (SNR) of 0 dB and +10 dB.

Results: Certain noises, such as traffic noise, reduced speech perception ability significantly more than other noises. The effect was greater at 0 dB SNR than at +10 dB SNR. The results suggest that not only the overall amplitude of the noise spectrum but also the spectro-temporal distribution of energy plays a key role in masking the cues for consonant perception in hearing impaired individuals.

Conclusion: The results of the current study reflect the importance of auditory ecology in understanding the acoustical world to which an individual with hearing impairment is exposed, and how our intervention strategy must be adapted accordingly.

Background

Auditory ecology refers to the relationship between the acoustic environments in which people live and their auditory needs in these environments. Gatehouse et al. [1] introduced the term auditory ecology to refer to the listening environments in which people are required to function, the tasks to be undertaken in these environments and the importance of these tasks in everyday life. Knowing the exact acoustic features of these surrounding sounds would help in choosing individualized listening programs or algorithms. The speech signal can be altered by background noise and by other interference such as reverberation and imperfections in the frequency and temporal responses of the communication channel. In spite of these alterations, human listeners are able to attend to one voice amidst other conversations and background noise, a phenomenon referred to as the “cocktail party” effect [2,3].

This adaptability to a changing acoustical environment, and the ability to perceive speech even when competing signals are present, is severely compromised in an individual with hearing impairment. Among speech sounds, consonants are acoustically weaker and more susceptible to the effects of noise. Noise masks the weak consonants to a greater degree than the higher-intensity vowels. However, unlike reverberation, this masking is not dependent on the energy of the preceding segments [4]. Consonants differ in terms of place of articulation and voicing. For example, /b/ and /p/ can be confused because distinguishing them requires precise temporal resolution: the time interval between different segments (e.g. voice onset time, VOT) needs to be distinct and accurate to the millisecond for these sounds to be discriminated. A couple of studies have investigated the effect of age on the ability to recognize speech in noise. Speech-in-noise abilities of adults and children were studied by Eisenberg et al. [5].

The results indicated that children in the age range of 10-12 years had better scores than those in the age range of 5-7 years, which the authors attributed to utilization of sensory information and to linguistic and cognitive developmental factors. Studies of speech perception abilities in individuals with hearing impairment have been carried out in carefully controlled experiments. They help us understand the processes and factors involved but fail to highlight the relative degree of difficulty these individuals experience in the real world. The effect of real world listening environments on speech scores has recently become a subject of interest. This is partly due to improvements in hearing aid technology. Some hearing aids have features such as data logging and automatic switching of programs that is activated when noise is detected.

The audiologist is therefore entrusted with the additional responsibility of verifying the appropriateness of these features, as well as taking additional steps in customizing the hearing aid output. In the Indian context, there is a dearth of such studies. Most studies carried out in the Indian scenario have reported the effects of noise on speech perception among individuals with hearing impairment with respect to white noise. The literature review also suggests that studies cataloging the typical noises an individual with hearing impairment encounters in daily life are lacking. It is common knowledge that the overall noise levels experienced by hearing impaired individuals are higher in India than those reported in western literature, and the way noise impacts communication would also be different. Hence the focus of the current study was to understand the effect of real world noise on speech perception in individuals with mild to moderate sensorineural hearing loss.

Aim and objective of the study: To understand the effect of real world noise on speech perception in individuals with mild to moderate sensorineural hearing loss. This is a preliminary study, an attempt at understanding the real world difficulties of adults with hearing impairment. Auditory ecology, a novel idea in India, may help us in future to understand the acoustical environment around us.

Method

Participants: Fifteen individuals with bilateral symmetrical sensorineural hearing loss in the age range of 30 to 50 years were the subjects of the study. The duration of hearing loss was more than 5 years. All participants were native Kannada speakers residing and working in Bengaluru, India, with similar socioeconomic status. Of the participants, 9 were female and 6 were male. They were all tested in double sound treated rooms using a Maico MA 53 audiometer with TDH 49 and BC 71 transducers. The audiometer had been calibrated according to ISO standards within the last 6 months. Hearing levels were measured and the degree of loss was calculated using ASHA standards.

All participants had undergone a detailed audiological evaluation in the past, which included impedance audiometry and electrophysiological testing. Individuals diagnosed with or flagged for Ménière’s disease, auditory neuropathy or tumor were excluded from the study.

The criteria for subject selection were as follows:

A. Inclusion criteria:

- Degree of hearing loss between mild and moderate.

- Same mother tongue (Kannada).

B. Exclusion criteria:

- Ski slope audiometric pattern.

- Different mother tongue.

- Minimal or greater than moderate degree of hearing loss.

Test material

Speech perception ability was measured using a nonsense syllable list. The syllables were recorded by a female native speaker of Kannada, and real world noises were later added to them. The real world noises used in the study were developed by Sreelekha [6] as part of an unpublished master’s dissertation. The prepared speech-in-noise test material was used as the tool to measure speech perception in noise. Two SNR conditions were chosen to represent an adverse listening condition and a favorable listening condition (0 dB and +10 dB SNR, respectively). The stimuli were 20 nonsense syllables in VCV order. Both vowels were /a/, and the consonant differed among the 20 items. The consonants were common consonants of the Kannada language. The target word was embedded in a sentence to reduce inflection or emotional intonation.

Recordings of the 20 words were made using a native Kannada female speaker. Three audiologists with 5 or more years of experience in teaching a graduate course were asked to evaluate the recordings for quality and naturalness. Later, PRAAT software [7] was used to select the target word, and a separate audio file was made for each word. All the words were equalized for loudness and scaled to 75 dB SPL using the “scale” menu option in PRAAT. The noises used were samples from real world noise situations, recorded using a Sound Level Meter (SLM). The noise samples were restaurant noise, temple noise, road traffic noise, noise while traveling in a bus, auto noise and white noise. The selection of noises was based on the unpublished dissertation of Sreelekha [6], in which a checklist was used to find the most common listening situations of a hearing impaired individual in the city of Bangalore. The noise samples were scaled in the same fashion as the target words. Words were mixed with the noises using Adobe Audition version 3.0. Two separate sets were prepared, one at 0 dB SNR and one at +10 dB SNR.
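The mixing step can be illustrated with a minimal sketch. The study itself used PRAAT and Adobe Audition; the Python below is only an assumed equivalent that scales a noise sample so that the RMS ratio of speech to noise matches a target SNR. The function names (`rms`, `mix_at_snr`, `measured_snr_db`) and the synthetic signals are hypothetical and stand in for the recorded syllables and noises.

```python
import math
import random


def rms(samples):
    """Root-mean-square amplitude of a sequence of samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def measured_snr_db(speech, noise):
    """SNR in dB computed from the RMS amplitudes of speech and noise."""
    return 20 * math.log10(rms(speech) / rms(noise))


def mix_at_snr(speech, noise, target_snr_db):
    """Scale the noise to reach target_snr_db, then add it to the speech.

    Returns (mixture, scaled_noise). The speech level is left unchanged.
    """
    if len(noise) < len(speech):
        raise ValueError("noise must be at least as long as the speech token")
    noise = noise[:len(speech)]
    # SNR(dB) = 20*log10(rms_speech / rms_noise), solved for the noise RMS
    target_noise_rms = rms(speech) / (10 ** (target_snr_db / 20))
    gain = target_noise_rms / rms(noise)
    scaled_noise = [gain * n for n in noise]
    mixture = [s + n for s, n in zip(speech, scaled_noise)]
    return mixture, scaled_noise


# Synthetic stand-ins (assumptions): a 200 Hz tone for the speech token and
# Gaussian noise for the masker, both 1 s long at 16 kHz.
rng = random.Random(0)
speech = [math.sin(2 * math.pi * 200 * t / 16000) for t in range(16000)]
noise = [rng.gauss(0.0, 0.3) for _ in range(16000)]

for target in (0, 10):  # the two SNR conditions used in the study
    _, scaled = mix_at_snr(speech, noise, target)
    print(f"target {target:+d} dB, measured {measured_snr_db(speech, scaled):.2f} dB")
```

This sketch defines SNR on long-term RMS level only; it does not model the loudness equalization or the dB SPL calibration described above.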

Instrumentation and procedure

Audiometric evaluation and immittance measurement were done using a calibrated audiometer and middle ear analyzer to determine each individual’s unaided hearing loss and middle ear physiologic state. The speech perception test was then carried out using the speech-in-noise test material prepared as explained earlier, for each of the noise types (white noise, traffic noise, temple noise, restaurant noise, in bus and in auto) at two SNRs (0 dB and +10 dB).
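As a rough illustration of the test design, the sketch below enumerates the six noise types at two SNRs and scores one list of responses. It assumes each syllable is scored simply as right or wrong, which the procedure above does not state explicitly; the names `NOISE_TYPES`, `SNRS_DB` and `percent_correct`, and the example syllables, are hypothetical.

```python
from itertools import product

NOISE_TYPES = ["white", "traffic", "temple", "restaurant", "bus", "auto"]
SNRS_DB = [0, 10]

# Every noise type is presented at every SNR: 6 x 2 = 12 test conditions.
conditions = list(product(NOISE_TYPES, SNRS_DB))


def percent_correct(targets, responses):
    """Percentage of syllables identified correctly (assumed all-or-none scoring)."""
    if len(targets) != len(responses):
        raise ValueError("targets and responses must have the same length")
    correct = sum(t == r for t, r in zip(targets, responses))
    return 100.0 * correct / len(targets)


# Hypothetical example with four VCV items; the actual list had 20 items.
targets = ["aka", "aga", "apa", "aba"]
responses = ["aka", "aka", "apa", "aba"]  # one voicing confusion
print(len(conditions), percent_correct(targets, responses))
```

With a 20-item list, each correctly identified syllable contributes 5 percentage points to a participant's score in a given condition.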

Statistical Analysis

The results of speech perception in noise were subjected to statistical analysis. MANOVA was applied to test the main effect of the group factor (type of noise on speech perception scores) and the between-subject effect. The Duncan T3 post hoc test was administered to determine which pairs of noises differed significantly in mean score. Summaries of central tendency were also calculated.
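The descriptive part of this analysis can be sketched as follows. This is not the MANOVA or the post hoc test, which would normally be run in a statistics package; it only shows one assumed way of tabulating central tendency per condition with the Python standard library. The function name `summarise` and the scores in `example_scores` are placeholders, not data from the study.

```python
from statistics import mean, median, stdev


def summarise(scores_by_condition):
    """Return n, mean, median and sample SD for each (noise, SNR) condition."""
    summary = {}
    for condition, scores in scores_by_condition.items():
        summary[condition] = {
            "n": len(scores),
            "mean": mean(scores),
            "median": median(scores),
            "sd": stdev(scores),  # sample standard deviation (n - 1)
        }
    return summary


# Placeholder percent-correct scores for three imaginary participants.
example_scores = {
    ("white", 0): [60.0, 65.0, 70.0],
    ("traffic", 0): [25.0, 30.0, 35.0],
}

for condition, stats in summarise(example_scores).items():
    print(condition, stats)
```

Keying the table by (noise type, SNR) keeps the summary aligned with the two-factor layout of the test conditions.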

Results

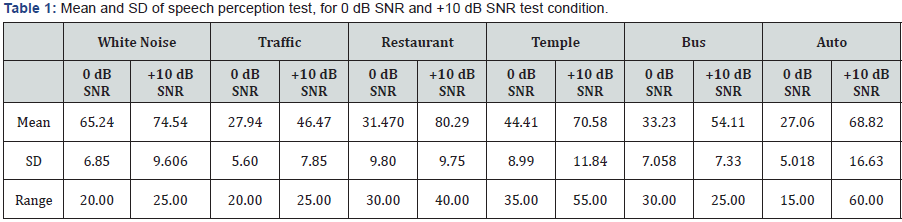

The objective of the present study was to understand the effects of exposure to real world noises in the everyday life of adults with hearing impairment, as reflected in their speech perception scores. Speech perception scores obtained with white noise were also compared with those obtained with each of the everyday noises selected for the study. Table 1 compares the word recognition scores obtained by individuals with hearing impairment in the different competing signals. The results in Table 1 indicate that in the +10 dB SNR condition, the mean score for each noise condition was above 65%. The participants obtained poor scores on the tasks with traffic noise (46.47%) and bus noise (54.11%). At 0 dB SNR, scores were poor across all types of noise. At +10 dB SNR, only restaurant noise and noise during auto travel showed higher scores (by 15%) than white noise. At 0 dB SNR, the scores obtained for all real world noises were poorer than for white noise.

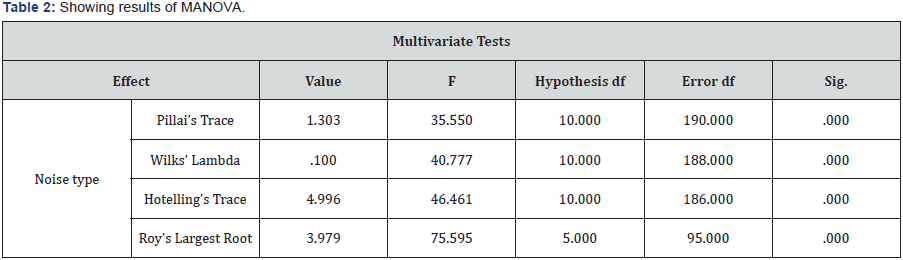

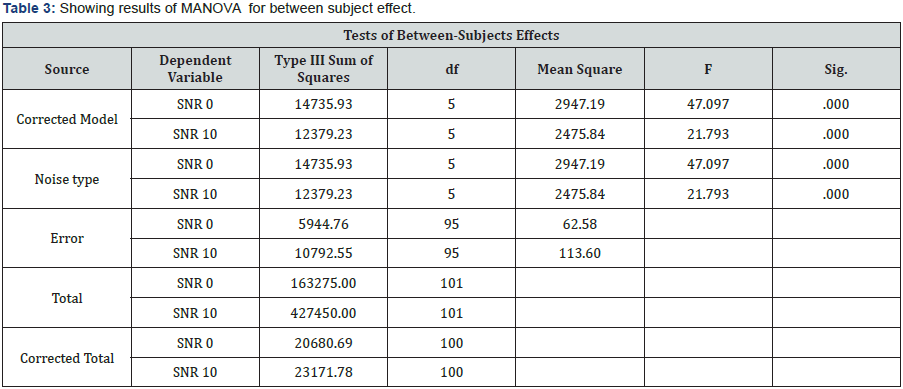

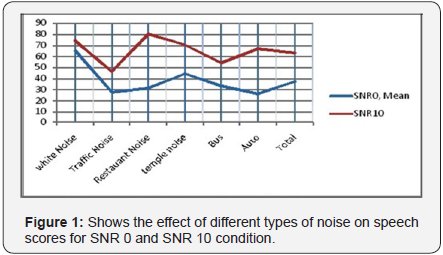

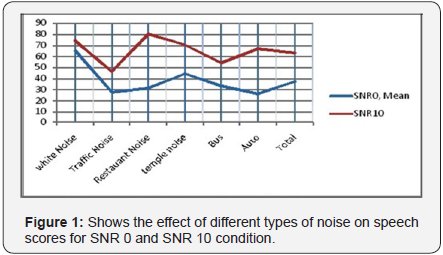

The MANOVA showed that the main effect of noise type on speech perception score was statistically significant (p < 0.001). The between-group effect was also significant (p < 0.001). The F values and significance values are given in Table 2. Further statistical analysis was done to determine which SNR conditions were affected by noise type. Table 3 shows a significant effect of the independent variable, noise type, in both the 0 dB and +10 dB SNR conditions (p < 0.001 in each). The mean speech scores across noise types for both SNR conditions are depicted in Figure 1. Overall, scores were better in the +10 dB SNR condition and poorer at 0 dB SNR, the adverse listening condition. This effect was seen uniformly across all types of noise.

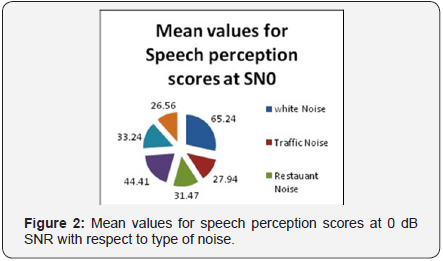

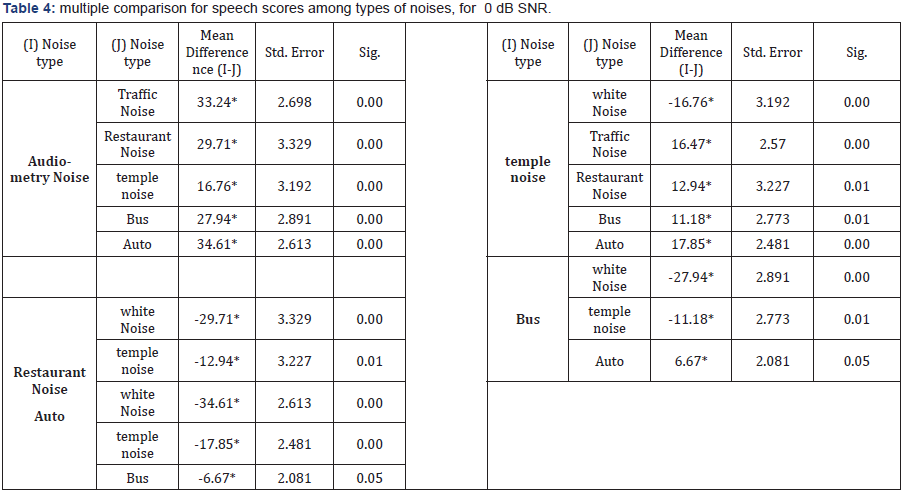

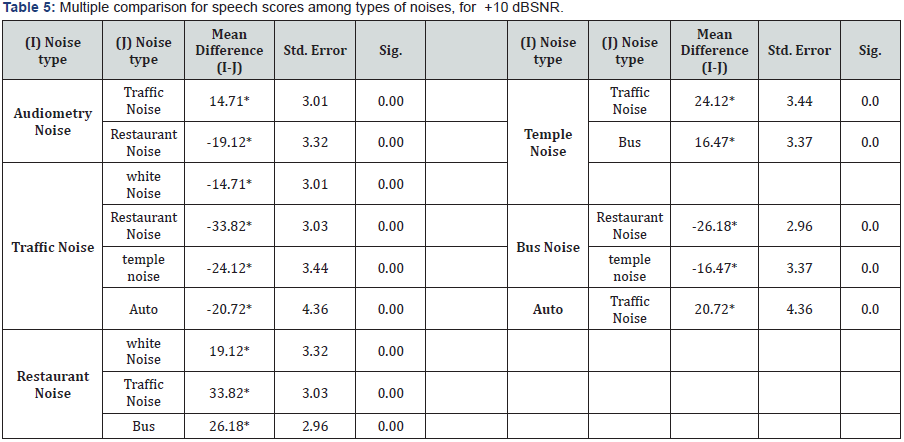

Figure 2 and Figure 3 show the mean speech perception scores at 0 dB and +10 dB SNR, respectively, for the different types of noise. Duncan T3 post hoc analysis was then carried out to determine which noise types contributed to the significant effects seen on MANOVA. The pairs of noise types showing statistically significant differences are listed in Table 4 and Table 5. Pairs that did not differ significantly are omitted for ease of viewing.

For the 0 dB SNR condition, speech scores obtained with white noise differed significantly from those for all other noise types. Temple noise also differed significantly from all other types of noise. The mean scores for these two were higher than for the other noise types (65% and 44%, respectively). The mean score for traffic noise was 27.94%. The bus and auto noise conditions also differed significantly from each other (mean values 27.1% and 33%, respectively). Although bus and auto travel share a common noise source (road traffic), speech perception scores were affected differently. This may be due to the differences in spectral distribution seen earlier on the 1/3rd octave analysis. Speech scores depend not only on the overall intensity level of the noise but also on its frequency distribution characteristics.

For the +10 dB SNR condition, speech scores obtained with audiometric noise differed significantly only from those for restaurant and traffic noise. This is a very different pattern from that of the 0 dB SNR condition. Scores with temple noise, on the other hand, differed significantly from those with traffic and bus noise. The subjects did poorly on tasks with traffic noise (mean score 46%), and these scores differed significantly from the scores with all other noise types. At the other end of the range, subjects obtained their best scores with restaurant noise (mean score 80%), better than with white noise as well. Subjects also showed a different trend for auto noise in the +10 dB SNR condition: scores with auto noise differed only from scores with traffic noise, a pattern unlike that at 0 dB SNR.

Discussion

The acoustic environment of an individual is complex and varies throughout the day. An individual may not be in a static auditory environment for the whole day. Each subject in the present study was exposed to varied types of acoustic environment in a single day, and the duration of exposure to each type of noise varied as well. Some commonality in their acoustic environments was delineated using diary record keeping in a previous study by Sreelekha. In a typical situation, in which an individual with hearing impairment travels to the audiology facility (e.g. the institute where the study was conducted), he or she would be exposed to road traffic noise while waiting for the bus, then to the noise in the bus, and then to the noise in the waiting area of the audiology facility. While sound levels varied, the subject’s primary goal remained the same, i.e. understanding conversations in each of these places [6]. This primary goal was not satisfactorily addressed by the hearing aids they had been prescribed and were currently using. Additionally, listening to announcements in the public transport system was also difficult for them.

Consonant perception scores varied when each of these acoustic noises was used as the masking signal. This highlights the difficulty an individual with hearing impairment encounters in the most important transactional activity of everyday life. Until now, the design of amplification devices has often considered the performance of hearing impaired listeners on speech tasks that use white noise or speech babble. Even the classification of auditory environments may fall short when applied to the Indian context. For example, the home environment is considered to be quiet, a party situation to be noisy, and so on. The importance of talker position in conversation is often underestimated. The assumption is that people talk one to one in most situations and mostly face the talker. In real life, however, even at home, people tend to talk from different directions and do not always stand close to the person with hearing impairment [6]. The present study therefore highlighted the variation in the improvement in speech perception that an individual with hearing impairment obtains while using the hearing device recommended to him or her.

Multi memory or multi programming hearing aids probably offer much needed flexibility. The additional feature of adaptable hearing aids, which detect noise activity and adjust gain parameters accordingly, needs to be explored in detail in future. The algorithms these automatic hearing aids use to classify the acoustic environment should be appropriate for the auditory ecology of the subject. Currently, the classification would probably be inaccurate in identifying the situational acoustics. Surroundings assumed to be quiet may in fact be noisy, and when one moves from noisy to severely noisy conditions, the hearing aid may make inadequate changes to gain parameters.

Cruckley et al. [8], in their study of the auditory ecology of school-going children, noted that multi memory hearing aids may better suit children in school. Their advantage may supplement the use of an FM system as well. Noise varied in level and frequency distribution as children moved from classroom to corridor to cafeteria or playground. As a result, hearing aids were not able to keep up with the changes. Speech perception scores may help us identify which listening situations are friendly for an individual with hearing impairment and which are not. In the present study, the five real world noises were mixed with nonsense syllables and presented to hearing impaired listeners for identification of the stimuli. The results show that in the +10 dB SNR condition all subjects were able to obtain scores of 65% and above irrespective of the type of noise used. At 0 dB SNR, scores fell to 35% to 40%.

As real world noises tend to fluctuate in energy distribution over time, some amount of masking release may occur. Studies by Howard-Jones and Rosen [9] and George et al. [10] showed that individuals with hearing impairment were unable to use this modulation information, and their scores were no better than their scores for narrow band noises. In the present study, participants obtained better scores with restaurant noise and noise in auto travel than with white noise at +10 dB SNR. It can be speculated that subjects were able to use masking release to obtain better scores in these types of noise. The hearing aids worn by them were able to retain the temporal modulations necessary for speech perception when restaurant noise was the masker.

At 0 dB SNR, scores were poorer overall than with white noise. The results for tasks with restaurant noise and auto travel noise may be attributed to masking release, and it may therefore be concluded that hearing impaired individuals are better able to understand conversations in at least these situations. The +10 dB SNR condition probably represents SNR levels in real life, and the scores on the test may therefore reflect the subjects’ real life experience to some extent. Scores at 0 dB SNR were below 50% for all types of real world noise. The relative unfamiliarity of the stimuli may also have contributed to the score differences seen. Studies with meaningful words or sentences may better show the real world difficulties experienced by adults with hearing impairment. This study may be replicated with such speech materials rather than consonants.

Conclusion

Auditory ecology is informative and helps us to know the acoustical world that an individual with hearing impairment lives in and how our intervention shapes it. Speech perception is a complex auditory-cognitive phenomenon, and hearing impairment affects it in more than one respect. Though our understanding of the processes and many advances in technology have helped us in this area, there is still more to learn. The study showed that the scores for correct identification of consonants were statistically different for each type of real world noise used. Overall, scores were markedly better only for restaurant noise and noise of travel in auto. The benefit of hearing aids in the real world is variable and differs from situation to situation. Scores were better at +10 dB SNR than at 0 dB SNR, as would be expected. As noise level increased, the hearing aid was not able to improve consonant perception in a significant way. This was true for all types of real world noise.

A limitation of the study was that only a small number of subjects was included and that sensorineural hearing losses of severe or profound degree were not included. Replication of the study with a larger sample and a gender-wise comparison may add to the understanding of speech perception. Most participants found it difficult to identify the words and substituted meaningful words for the nonsense words presented. Meaningful words or sentences may therefore be a better choice of stimulus material. The overall scores may then better reflect real world speech perception performance among adults with hearing impairment.

Future research may concentrate on the relative advantages or accuracy of sound classification in automatic amplification devices. This study may motivate researchers to look into the data logging features of hearing aids and the information they provide to the audiologist in facilitating better programming methods. Studies may also focus on developing a standardized list of sounds that are essential to hearing impaired individuals in each of the listening situations of their daily life, and on how effective amplification devices are in maintaining the audibility of these sounds. Comparison of acoustic analyses of real world noise and the output of the hearing aid would also be a necessary step in future. This will help us verify the effect of technology in reducing most or part of the noise to which hearing impaired individuals are exposed.

References

- Gatehouse S, Elberling C, Naylor G (1999) Aspects of auditory ecology and psychoacoustic function as determinants of benefits from and candidature for non-linear processing in hearing aids. Danavox Symposium.

- Cherry EC (1953) Some experiments on the recognition of speech, with one and two ears. Journal of the Acoustical Society of America 25: 975-979.

- Yost WA (1997) The cocktail party problem: Forty years later. In R Gilkey, T Anderson (Eds.), Binaural and spatial hearing in real and virtual environments, Mahwah, NJ: Erlbaum, pp. 329-348.

- Nábělek AK, Letowski TR, Tucker FM (1989) Reverberant overlap and self-masking in consonant identification. J Acoust Soc Am 86(4): 1259-1265.

- Eisenberg LS, Shannon RV, Martinez AS, Wygonski J, Boothroyd A (2000) Speech recognition with reduced spectral cues as a function of age. J Acoust Soc Am 107(5 Pt 1): 2704-2710.

- Sreelekha Dudekula (2014) Effect of real world noise on speech understanding in individuals with hearing impairment. Unpublished Master’s Dissertation. Bangalore University, India.

- http://www.fon.hum.uva.nl/paul/praat.html.

- Cruckley J, Scollie S, Parsa V (2011) An Exploration of Non-Quiet Listening at School. Journal of Educational Audiology 17: 23-35.

- Howard-Jones PA, Rosen S (1993) Uncomodulated glimpsing in “checkerboard” noise. J Acoust Soc Am 93(5): 2915-2922.

- George EL, Festen JM, Houtgast T (2006) Factors affecting masking release for speech in modulated noise for normal-hearing and hearing-impaired listeners. Journal of the Acoustical Society of America 120(4): 2295-2311.