Some Developments in Clustering Analysis on Stochastic Processes

Qidi Peng1, Nan Rao1* and Ran Zhao2

1 Institute of Mathematical Sciences, Claremont Graduate University, USA

2Drucker School of Management and Institute of Mathematical Sciences, Claremont Graduate University, USA

Submission: October 17, 2018; Published: April 12, 2019

*Corresponding author: Nan Rao, Institute of Mathematical Sciences, Claremont Graduate University. Address: 1237 N. Dartmouth Ave. Claremont, CA 91711, USA

How to cite this article: Qidi Peng, Nan Rao, Ran Zhao. Some Developments in Clustering Analysis on Stochastic Processes. Biostat Biometrics Open Acc J. 2019; 9(3): 555764. DOI: 10.19080/BBOAJ.2019.09.555764

Abstract

We review some developments on clustering stochastic processes and come with the conclusion that asymptotically consistent clustering algorithms can be obtained when the processes are ergodic and the dissimilarity measure satisfies the triangle inequality. Examples are provided when the processes are distribution ergodic, covariance ergodic and locally asymptotically self-similar, respectively.

Keywords: stochastic process, unsupervised clustering, stationary ergodic processes, local asymptotic self-similarity

Abbrevations: fBm: Fractional Brownian Motion; mBm: Multifractional Brownian Motion

Introduction

Stochastic process is an infinite sequence of random variables indexed by “time”. The time indexes can be either discrete or continuous. Stochastic process type data have been broadly explored in biological and medical research [1-4]. Unsupervised learning on stochastic processes (or time series) has increasingly attracted people from various areas of research and practice. Among the above unsupervised learning problems, there is one subject, called clustering analysis, aims to discover patterns of stochastic process type data. There is a rich literature in bioinformatics, biostatistics and genetics on clustering stochastic process type data. We refer the readers to the review of Aghabozorgi et al. [5] for updates of clustering analysis on stochastic processes till 2015. Recently Khaleghi et al. [6-8] obtained asymptotically consistent algorithms for clustering distribution stationary ergodic processes, in the case where the correct number of clusters is known or unknown. In their framework, the key idea is to define a proper dissimilarity measured between any 2 observed processes, which characterizes the features of stationarity and ergodicity. Further Peng et al. [9,10] derived consistent algorithms for clustering covariance stationary ergodic processes and locally asymptotically self-similar processes.

In this framework we review the recent developments in clustering analysis on the following 3 types of stochastic processes:

• Type (1) distribution stationary ergodic processes;

• Type (2) covariance stationary ergodic processes;

• Type (3) locally asymptotically self-similar processes.

According to the nature of each type of processes, the ground-truths in the 3 clustering problems are defined differently. In the ground-truth of Type (1), two processes of identical process distributions are in one cluster; in the ground-truth of Type (2), two processes having the same means and covariance structures are in one cluster; for the third type, the pattern is the means and covariance structures of the tangent processes.

From the summary we conclude that a sufficient condition for the clustering algorithms (provided below) being consistent, is that the corresponding dissimilarity measure and its estimates satisfy the triangle inequality and its estimator are consistent (they converge to the theoretical dissimilarity as the path length goes up to the infinity).

Asymptotically consistent algorithms

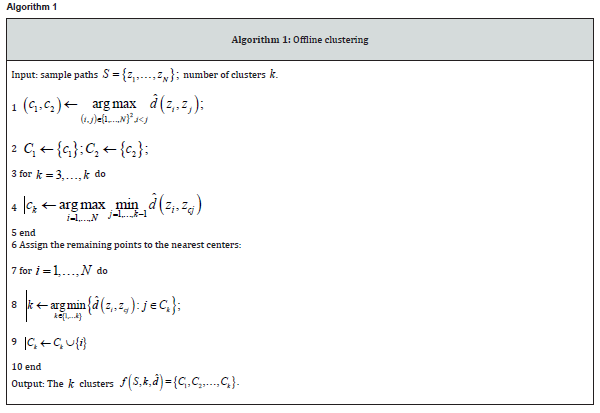

In [8], assuming the correct number of clusters k is known, two types of datasets are considered in the clustering analysis: offline dataset and online dataset. In the offline dataset, the number of sample paths and the length of each sample path don’t vary with respect time. However, in the offline dataset, both can vary. In [8] for each type of datasets, by using a particular dissimilarity measure, asymptotically consistent algorithms (Algorithm 1 for offline dataset and Algorithm 2 for online dataset) have been derived, aiming to cluster distribution stationary ergodic processes. Here asymptotic consistency means the output clusters from the algorithm converge to the ground-truths either in probability (weak sense) or almost surely (strong sense). Based on Khaleghi et al. [6-8] approaches, Peng et al. [9,10] suggested asymptotically consistent algorithms that are valid for a more general class of processes and dissimilarity measures.

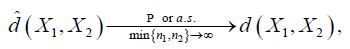

Let 12,XX be one of the 3 types of processes in the above section. We denote by ()12,dXX a dissimilarity measure between 2 stochastic processes 12,,XX which satisfies the triangle inequality. And we denote by ()12ˆ,dXX the estimate of ()12,,dXX where for 1,2,i= ()()()1,,iiinixxx= is an observed sample path of the process ,iX with length .in Moreover, assume that ˆd also verifies the triangle inequality and it is consistent: for all 12,,xx sampled from 12,XX respectively,

Where Ρ→ and .as→ denote the convergence in probability and almost sure convergence, respectively.

The clustering algorithms suggested by Peng et al. [9, 10] are given below (Algorithms 1).

Algorithm 1

Theorem 2.1: Algorithms 1 and 2 are asymptotically consistent for the processes of Types (1) and (2) respectively, provided that the correct number k of clusters is known, and the sample dissimilarity measure ˆd is consistent and both ˆd and d satisfy the triangle inequalities.

Proof: The consistency of Algorithms 1 and 2 applied for clustering processes of Type (1) is proved in [8]; the consistency of the two algorithms applied for clustering processes of Type (2) in proved in [10]. It is worth noting that in the above proof, the key features used are the fact that both d and ˆd verify the triangle inequalities and ˆd is a consistent estimator of .d

For clustering the processes of Type (3), since additional assumption is needed, thus we will introduce it in Section 4. For clustering the processes of Type (1), the specific form of d and ˆd are given in [8]. Then we mainly introduce the other 2 pairs of ()ˆ,dd for clustering analysis on the processes of Types (2) and (3).

Dissimilarity measure for covariance stationary ergodic processes

The definition of covariance stationary ergodic process is given below (Algorithm 2).

Definition 3.1: A stochastic process {Xt}t∈N is covariance stationary ergodic

if:

• its mean and covariance are invariant subject to any time shift;

• any of its sample autocovariance converges in probability to the theoretical autocovariance function as the sample length goes to .+

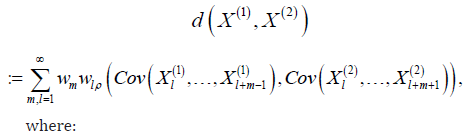

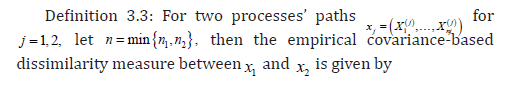

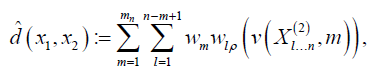

The dissimilarity measure d and its sample estimate ˆd suggested in Peng et al. [10] to measure the distance between 2 covariance stationary ergodic processes are given below:

Definition 3.2: The dissimilarity measure d between a pair of covariance stationary ergodic processes X(1),(2) is defined as follows:

where:

• The sequence of positive weights {}jw is chosen such that dX(1),(2) is finite.

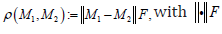

• The distance ρ between 2 equal-sized covariance matrices 1,M 2M is defined to be

where:

• nm chosen to be (),on denotes the size of covariance matrices considered in the estimator.

Dissimilarity measure for locally asymptotically self-similar processes

In this section we review the work on clustering processes of Type (3). Locally asymptotically self-similar processes play a key role in the study of fractal geometry and wavelet analysis. They are generally not covariance stationary, however, one can still apply the dissimilarity measured introduced in Section 3 under some assumption (see [9]).

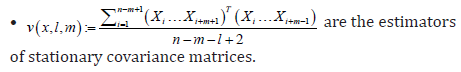

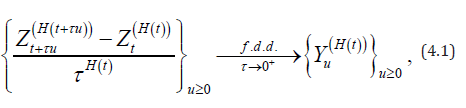

The following definition of locally asymptotically self-similar process is given in [11].

Definition 4.1: A continuous-time stochastic process ()(){}0HtttZ≥ with its index ()H⋅ being a continuous function valued in ()0,1, is called locally asymptotically self-similar, if for each 0,t≥ there exists a non-degenerate self-similar process ()(){}0HtuuY≥ with self-similarity index (),Ht such that

where the convergence ...fdd→ is in the sense of all the finite dimensional distributions.

Here ()(){}HtuuY is called the tangent process of ()(){}HtttZat t (see [12]). Throughout [9] it is assumed that the datasets are sampled from a known number of processes satisfying the following condition:

• Assumption (A): The processes are locally asymptotically self-similar with distinct functional indexes ();H⋅ their tangent processes increment processes are covariance stationary ergodic.

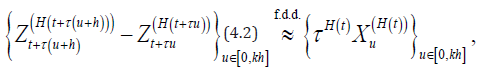

Then from (4.1), Peng et al. [9] showed the following: under Assumption (A), for τ being sufficiently small,

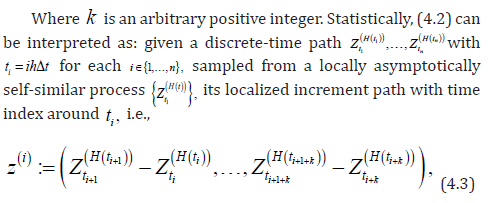

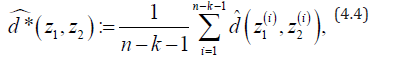

is approximately distributed as a covariance stationary ergodic increment process of the self-similar process ()()(){}[]0,.iiHtHtuukhtX∈Δ This fact inspires one to define the sample dissimilarity measure between two paths of locally asymptotically self-similar processes 1z and 2z as below:

Accordingly, the consistency of Algorithms 1 and 2 can be expressed in the following way:

Theorem 4.2: Under Assumption (A), Algorithms 1 and 2 are approximately asymptotically consistent, if

d is replaced with *.

In Theorem 4.2, “approximately” is in the sense of Eq. (4.2).

Simulation Study

In the frameworks of khaleghi et al. [8], Peng et al. [9] and Peng et al. [10], simulation study are provided. In [8], a distribution stationary ergodic process is simulated based on random walk; in [9] the increment process of fractional Brownian motion [13], is picked as an example of covariance stationary ergodic process; in [9] , simulation study is performed on the so-called multifractional Brownian motion [14], which is an excellent example of locally asymptotically self-similar process. The simulation study results for clustering distribution stationary ergodic processes are given in [8]. Here we summarize the results for clustering the processes of Types (2) and (3), from Peng et al. [9,10] respectively

Clustering processes of type (2): fractional brownian motion

Fractional Brownian motion (fBm) (){}0,HsBs≥ as a natural extension of the Brownian motion, was first introduced by Kolmogorov in 1940 and then popularized by Mandelbroit, et al. [15] and Taqqu [16]. The influences made by the fractional Brownian motion model have been on a great many fields such as biological science, physical sciences and economics (see [17]). As a stationary increment process, it is shown that the increment process of the fBm is covariance stationary ergodic (see [18,19]).

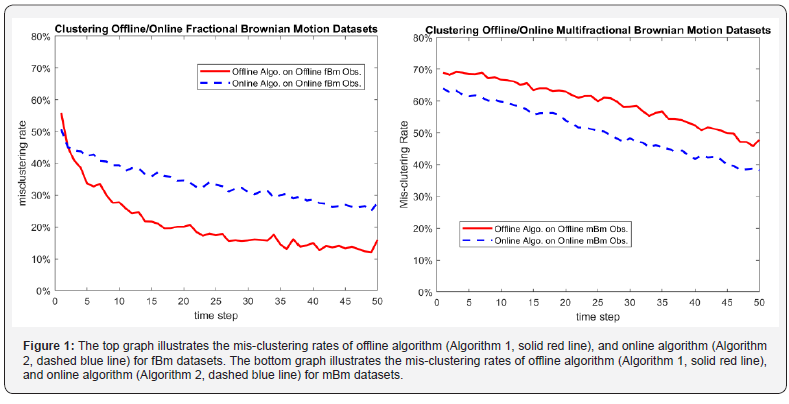

In [10], the clustering algorithms are performed on a dataset of 100 paths of fBms with 5k= clusters. In the sample dissimilarity measure the so-called log*-transformation is applied to increase the efficiency of the algorithms. One considers the mis-clustering rates to be the measure of fitting errors. The top figure in Figure 1 presents the comparison results of the offline and online algorithms, based on the behavior of mis-clustering rates as time increases. Both algorithms show to be asymptotically consistent as the mis-clustering rates decrease.

Clustering processes of type (3): multifractional brownian motion

Multifractional Brownian motion (mBm) ()(){}0,HttWt≥ as a natural generalization of the fBm, was introduced in [14,20]. Then it was quickly applied to describe phenomena in for instance molecular biology [21], biomedical engineering [22], and biophysics [23]. It can be obtained from [11] that the mBm is locally asymptotically self similar satisfying Assumption (A). The datasets of mBms for testing the 2 clustering algorithms are similar to those of fBms. The performance of the algorithms are shown in the bottom figure in Figure 1. Similar conclusion can be drawn that both offline and online algorithms are approximately asymptotically consistent.

References

- Doris Damian, Matej Orešič, Elwin V, Jacqueline Meulman, Jerome Friedman, et al. (2007) Applications of a new subspace clustering algorithm (COSA) in medical systems biology. Metabolomics 3(1): 69-77.

- Weizhong Zhao, Wen Zou, James J Chen (2014) Topic modeling for cluster analysis of large biological and medical datasets. BMC Bioinformatics 15(11): S11.

- Jääskinen V, Parkkinen V, Cheng L, Corander J (2014) Bayesian clustering of DNA sequences using markov chains and a stochastic partition model. Stat Appl Genet Mol Biol 13(1): 105-121.

- McDowell IC, Manandhar D, Vockley CM, Schmid AK, Reddy TE, et al. (2018) Clustering gene expression time series data using an infinite Gaussian process mixture model. PLoS Comput Biol 14(1): e1005896.

- Saeed Aghabozorgi, Ali Seyed Shirkhorshidi, Teh Ying Wah (2015) Time-series clustering - a decade review. Information Systems 53: 16-38.

- Azadeh Khaleghi, Daniil Ryabko (2012) Locating changes in highly dependent data with unknown number of change points. In Advances in Neural Information Processing Systems 3086-3094.

- Azadeh Khaleghi, Daniil Ryabko (2014) Asymptotically consistent estimation of the number of change points in highly dependent time series. In International Conference on Machine Learning 539-547.

- Azedeh Khaleghi, Daniil Ryabko, Jeremie Mari, Philippe Preux (2016) Consistent algorithms for clustering time series. Journal of Machine Learning Research 17(3): 1-32.

- Qidi Peng, Nan Rao, Ran Zhao (2018) Clustering analysis on locally asymptotically self-similar processes with known number of clusters 1: 1-25.

- Qidi Peng, Nan Rao, Ran Zhao (2018) Covariance-based dissimilarity measures applied to clustering wide-sense stationary ergodic processes. arXiv, 1801.09049v1.

- Brahim Boufoussi, Marco Dozzi, Raby Guerbaz (2008) Path properties of a class of locally asymptotically self-similar processes. Electronic Journal of Probability 13(29): 898-921.

- Kenneth Falconer (2002) Tangent fields and the local structure of random fields. Journal of Theoretical Probability 15(3): 731-750.

- AntoineAyache, Jacques Lévy Véhel (2004) On the identification of the pointwise Hölder exponent of the generalized multifractional Brownian motion. Stochastic Processes and their Applications 111(1): 119-156.

- Romain-Francois Peltier, Jacques Levy Vehel. Multifractional Brownian motion: definition and preliminary results. HAL 2645: 239-265.

- B Mandelbrot, J W van Ness (1986) Fractional Brownian motions, fractional noises and applications. SIAM Review 10(4): 422-437.

- Murad S Taqqu (2013) Benoît Mandelbrot and Fractional Brownian Motion. Statistical Science 28(1): 131-134.

- Höfling F, Franosch T (2013) Anomalous transport in the crowded world of biological cells. Rep Prog Phys 76(4): 046602.

- Maruyama G (1970) Infinitely divisible processes. Theory of Probability and its Applications 15(1): 1-22.

- Jakub Slezak (2017) Asymptotic behaviour of time averages for non-ergodic Gaussian processes. Annals of Physics 383: 285-311.

- Antoine Ayache, Serge Cohen, and J Levy-Vehel. The covariance structure of multifractional Brownian motion, with application to long range dependence. In Acoustics, Speech, and Signal Processing, 2000. ICASSP’00. Proceedings. 2000 IEEE International Conference 6: 3810-3813.

- Marquez-Lago TT, Leier A, Burrage K (2012) Anomalous diffusion and multifractional brownian motion: simulating molecular crowding and physical obstacles in systems biology. IET Systems Biology 6(4): 134-142.

- Buard B, Humeau A, Rousseau D, Chapeau-Blondeau F, Abrahamc P (2010) Pointwise Holder exponents of a model for skin laser doppler flowmetry signals based on six nonlinear coupled oscillators with linear and parametric couplings: Comparison with experimental data from young healthy subjects. IRBM 31: 175-181.

- Humeau A, Chapeau-Blondeau F, Rousseau D, Tartas M, Fromy B, et al. Multifractality in the peripheral cardiovascular system from pointwise Holder exponents of laser doppler flowmetry signals. Biophys J 93(12): L59–L61.