SYNTHESIS: A Platform for Generating Digital Cultural Heritage Assets and Creating Virtual or Physical Tours Through Geocoding

Stelios C.A. Thomopoulos*, Giorgos Farazis, Chryssa Scordili, Korina Kassianou, Christina P. Thomopoulos and Dimitris Zacharakis

Integrated Systems Laboratory, Institute of Informatics and Telecommunications, National Center for Scientific Research “Demokritos”, Greece

Submission: June 3, 2020; Published: June 23, 2020

*Corresponding author: Stelios C.A. Thomopoulos, National Center of Scientific Research “Demokritos,” Institute of Informatics & Telecommunications, Integrated Systems Laboratory, P.O. Box 60037, 15310, Agia Paraskevi, Greece

How to cite this article: Stelios C.A. Thomopoulos, Giorgos Farazis, Chryssa Scordili, Korina Kassianou, Christina P. Thomopoulos, Dimitris Zacharakis. SYNTHESIS: A Platform for Generating Digital Cultural Heritage Assets and Creating Virtual or Physical Tours Through Geocoding. Glob J Arch & Anthropol. 2020; 11(4): 555816. DOI: 10.19080/GJAA.2020.11.555816

Abstract

The paper describes the SYNTHESIS platform, which is being developed at the Integrated Systems Laboratory (ISL) in the context of the SYNTELESIS project [10], for generating, geocoding and navigating through digital Cultural Heritage (CH) assets in virtual as well as physical spaces. SYNTHESIS enables the creation of high-quality interactive and educational 3D immersive environments, the georeferencing of digital assets in the virtual or physical space, and navigation through the space of geocoded digital assets. The navigation takes the form of a virtual tour when the digital assets are geocoded in the virtual space using a relative coordinate georeferencing system, or of a physical tour when they are geocoded in the physical space using an absolute coordinate georeferencing system, as in the Phaistos Palace use case in this paper.

Keywords: Cultural heritage; Geocoding; Physical space; Virtual environment; Digital assets; Archeology; Architecture

Introduction

SYNTHESIS is a platform enabling the creation of high-quality interactive and educational 3D immersive environments, the georeferencing of digital assets in the virtual or physical space, and navigation through the space of geocoded digital assets, as either a virtual tour or a physical one, depending on the space to which the toured digital assets are georeferenced.

The SYNTHESIS platform consists of three main components:

a) a set of tools that allow the creation of the 3D virtual space and the associated digital assets;

b) the Waygoo platform for georeferencing 3D/2D virtual spaces and assets in absolute or relative coordinate systems; and

c) the Waygoo mobile application to experience the virtual tours using iOS and Android smart phones.

Browsing a 3D virtual environment

With the use of Virtual Reality (VR) technologies, the end user’s experience as a “digital” visitor to places and monuments of cultural and touristic interest is enriched. Through a detailed three-dimensional representation/reconstruction of the CH infrastructure, visitors are guided through the digital twin of the CH monument and/or the space of the digital CH assets with no spatial or temporal constraints. The three-dimensional (3D) digital representations/reconstructions of CH monuments and assets allow the visitor to browse the space either in its current state or in a three-dimensional model that presents the space as reconstructed from valid data. The virtual space seeks to create a digital cultural reference point for extending and transmitting cultural elements and infrastructures to a wider audience, taking age criteria and preferences into account. It combines entertainment and education in an accessible and fun way for wide audiences, while at the same time providing an innovative, detailed browsing tool. High-quality digital environments can be browsed either through virtual reality (VR) in the virtual space or through augmented reality (AR) in the physical space. Both ways are supported by the SYNTHESIS platform.

The platform includes:

a) a back-end subsystem on Unity platform or related technology to create the 3D models;

b) a front-end subsystem that includes a desktop browser interface for geotagging and georeferencing 3D models and digital assets; and

c) desktop game applications and portable devices, including smart phones, for VR/AR tours in the virtual and/or physical space in which the digital cultural heritage (CH) assets are experienced.

The aim of SYNTHESIS is to upgrade the user experience as a digital visitor to cultural sites and monuments. The user has the option of a personalized alternative tour of the spaces he chooses, which includes narratives, animations and educational interactive games.

Next, we describe some of the key components and features of the SYNTHESIS Platform.

The iGuide 3D environment for 3D modeling and serious game development

Interpretation based on historical data (theoretical support), construction analysis and creation of modules

The development of a guide application for an archeological site enables a browsing experience of its digital version, with the aim of expanding the visitor’s perception and experience and allowing the visitor to return to the original CH environment with an enriched view. The challenges are many from the outset. They concern the understanding and communication of the space and of the CH findings therein, through the presentation and highlighting of the multiple interpretations offered by research (archeological, architectural, theoretical). Virtual worlds have already been used to present and represent cultural heritage sites with the intention of making a greater cultural impact on a wider audience. Cultural heritage is a public good in the sense that it belongs to everyone: all have the right to its knowledge and the obligation to protect it. Archeology, in trying to address the uncertainty about what the world was like in the past, relies on documentation as its guide. Realistic representations tend to turn an answer to that question into a certainty, freezing the image in time and giving the impression that this is how things were, so caution should be exercised in how these reconstructions are presented. From the start of the research process, there was therefore a need to avoid reproducing certainties and to cite interpretations that leave room for doubt and criticism. The problem we initially encountered was the uniqueness of the layout of the spaces in the relief. For example, the archeological site of the remains of a Minoan city in Crete, Greece, is characterized by the complexity of its spaces and routes.
According to Klairi Palyvou, an emeritus professor of architectural history at the Aristotle University of Thessaloniki, “the main features of the fundamental trends of what we call “architectural tradition of Crete or the South Aegean” have already revealed the way of building an urban formation from the Neolithic houses under the urban complex and known as “Palace” of Knossos” [1].

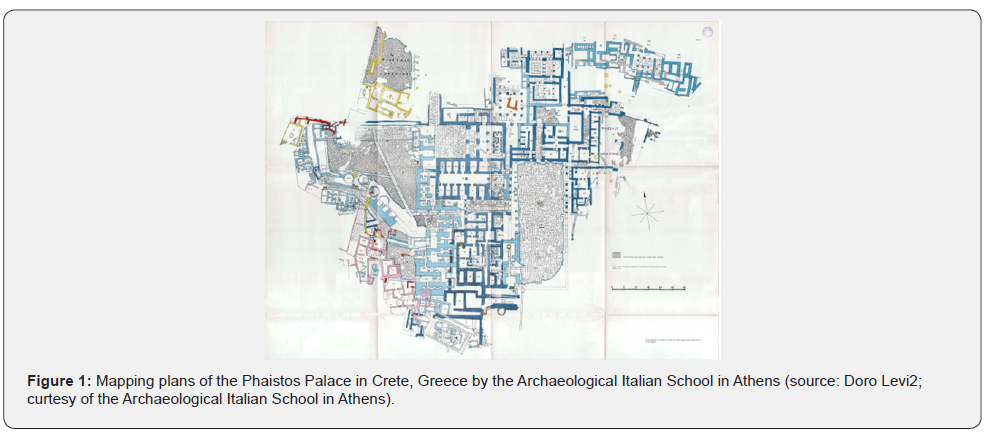

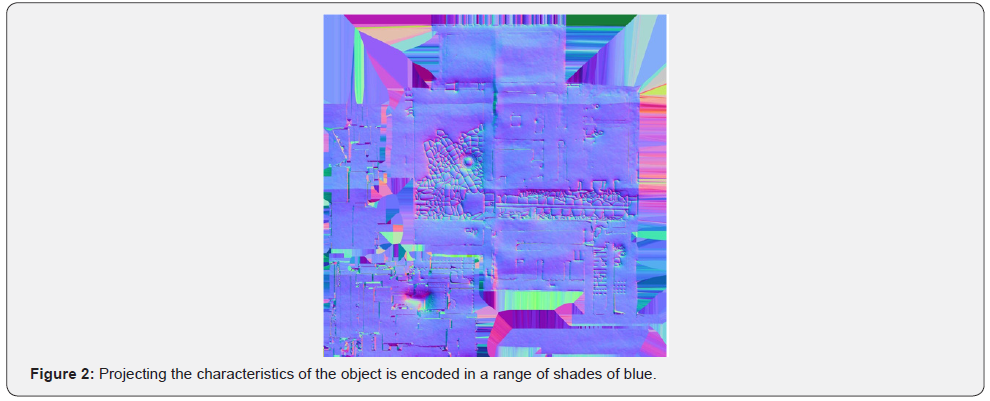

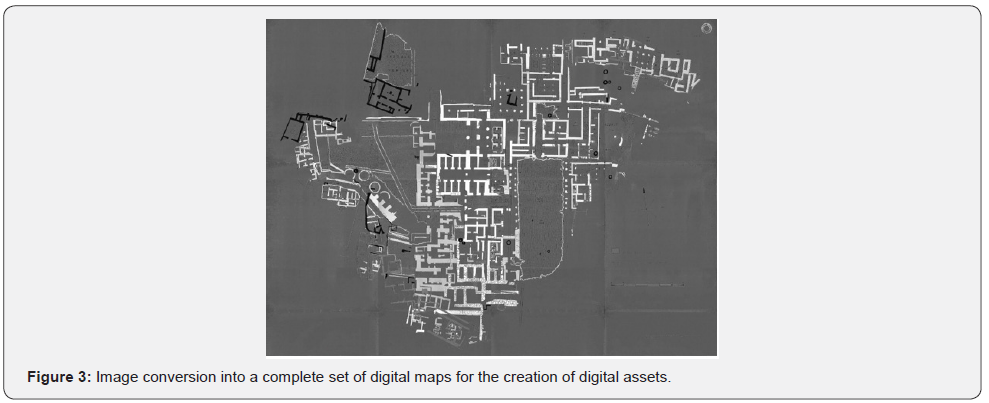

Initially, the search for published material for research use yielded the series of survey plans of the Italian Archaeological School at Athens [2] (Figure 1). At this stage of collecting the plans of the architectural remains there is, in several cases, insufficient evidence for architectural and structural elements that have been lost or destroyed, either for a whole building or for important construction details. Image-based modeling methods offer one way to acquire structural elements computationally, so that the gaps in the ruined environment can be digitally filled as far as possible. The mapping of the relief (Figure 2), achieved by projecting the characteristics of the object, is encoded in a range of shades of blue, where each tone corresponds to a different altitude level.
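The blue-shade encoding of the relief can be illustrated with a minimal sketch. It assumes a linear mapping from an 8-bit blue value to altitude, with darker tones lower; the function names and the altitude range are hypothetical and are not taken from the project.

```python
def blue_to_altitude(blue, min_alt_m=0.0, max_alt_m=10.0):
    """Map an 8-bit blue value (0-255) to an altitude in metres.

    Assumption: the encoding is linear and darker tones are lower.
    The altitude range is a made-up example, not site data.
    """
    if not 0 <= blue <= 255:
        raise ValueError("blue channel must be in the range 0-255")
    return min_alt_m + (blue / 255.0) * (max_alt_m - min_alt_m)


def decode_relief(blue_rows, min_alt_m=0.0, max_alt_m=10.0):
    """Convert a grid of blue values into a grid of altitudes."""
    return [
        [blue_to_altitude(b, min_alt_m, max_alt_m) for b in row]
        for row in blue_rows
    ]
```

With such a grid of altitudes, an element known to sit at ground level can be placed at the height decoded for its position on the plan.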

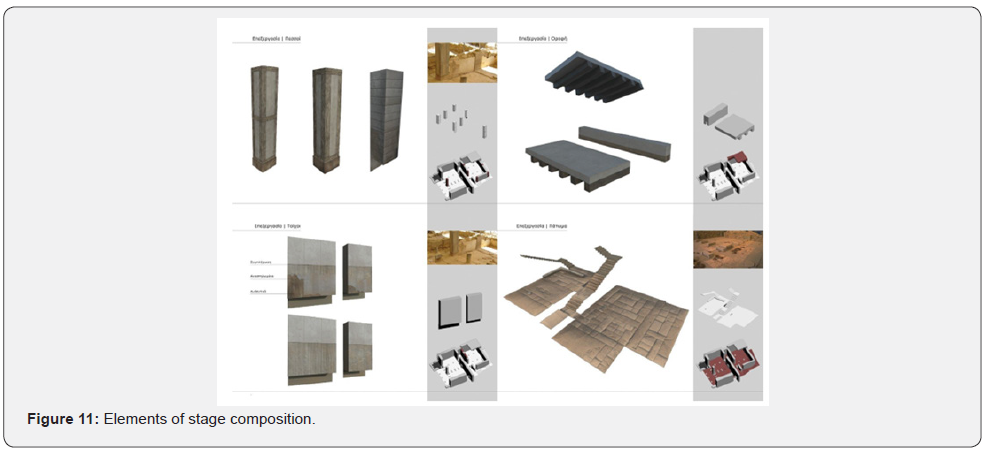

The display of the features is achieved with software that converts an image into a complete set of digital maps for the creation of digital assets (Bitmap2Material). Through the mapping of the relief we can digitally place all the elements that are at ground level at a height for which we have an indication of where the floor ends (Figure 3). Another aspect taken into account is the research on the structural role of wood in the construction system of the Minoan world [3,4]. Wood was a fundamental construction element, acting as a kind of anti-seismic mechanism that strengthened the masonry. It appears to have been the main building block, so it is important to consider it in the graphic reconstruction. There followed a systematic attempt to derive a typology of design elements from the whole three-dimensional model, in preparation for the creation of a parametrically designed environment consisting of typological elements. It can be difficult to create original typological elements from a specific archaeological site, and doing so requires a digital approach that treats the site as a system of structural elements with a low degree of standardization. The composition of the elements creates a general sense of space, but not necessarily a “complete” reality as a whole. The data is modeled with a 3D modeling program, such as Blender 3D or 3ds Max [5,6].

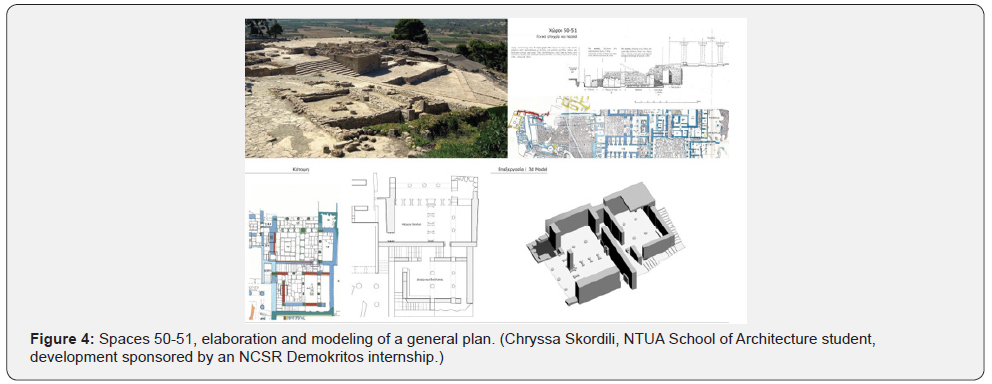

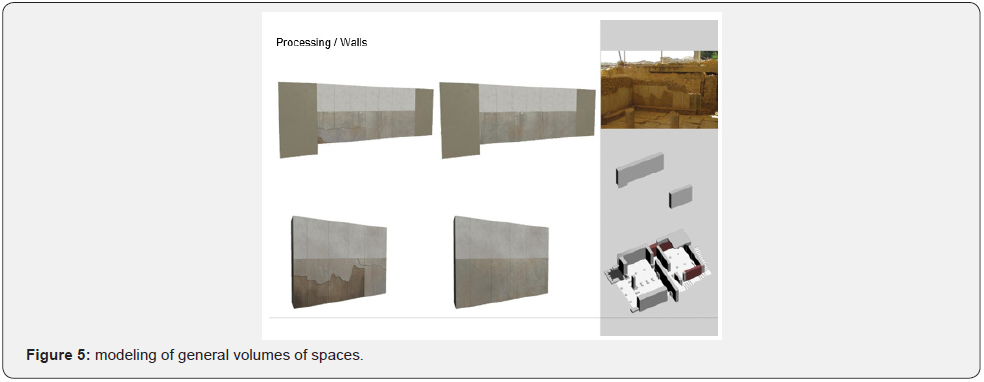

3D and detailed modeling of the selected areas of interest with high performance of aesthetics and spatial qualities (Figures 5 & 6)

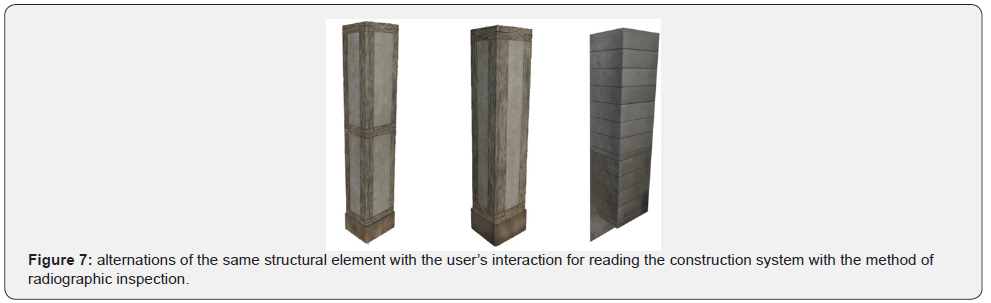

Our methodology takes into account a variety of interpretations that try to approach the original hypothesis about the form and shape of structural elements (walls, columns, etc.). For example, gaps in the walls indicate the previous existence of wood [4]. Because the excavation process can sometimes cause inevitable “destruction” [4], digital media can enhance the virtual restoration or restructuring process. Computer graphics systems, through the appropriate programs, can restore damaged parts, creating three-dimensional models of the artefact in its original form (Figure 7). Digital sculpture is especially useful for constructing small and organic details on surfaces. By constructing standard structural elements such as beams, planks, tiles, etc. that have unique sides, we created a set of tools that can be used to assemble all kinds of spaces (such as walls, floors, etc.). Such elements are designed with digital sculpture programs such as ZBrush.

During this step, the goal is to develop a group of models, each with two or three levels of detail (LOD). The optimization addresses the difficulty of handling high-detail geometries once they are broken down into polygons. To avoid this, the amount of detail is made to depend on the distance from the camera, i.e. the player. The greater the distance from the mesh, the lower the level of detail of its polygonal representation can be. Switching among the three levels of geometry is performed automatically, depending on the distance of the user-player (camera) from the object. This improves system performance, as the system does not need to load all 3D models in high detail, but only those close to the player, with the rest loaded at their two lower resolutions.
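The distance-based switching described above can be sketched as follows. This is an illustration only: the threshold distances and the function name are hypothetical, and a game engine such as Unity provides its own built-in LOD mechanism.

```python
def select_lod(distance, thresholds=(10.0, 30.0)):
    """Return the level of detail to display for an object.

    0 is the most detailed model; higher numbers are coarser.
    `thresholds` holds ascending switch distances (hypothetical values,
    in scene units): closer than 10 -> LOD 0, closer than 30 -> LOD 1,
    anything farther -> LOD 2.
    """
    for level, limit in enumerate(thresholds):
        if distance < limit:
            return level
    return len(thresholds)
```

For example, `select_lod(5.0)` selects the full-detail model, while `select_lod(100.0)` selects the coarsest of the three.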

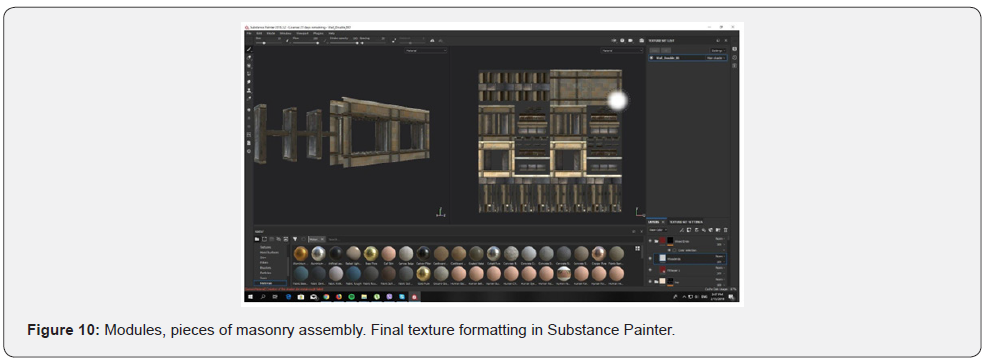

By projecting features of the high-resolution model onto its low-resolution counterpart, as shown in Figure 12, we obtain visually the same result: the high-resolution model is replaced by the impression of detail that the maps convey. The elements have multiple texture maps, one of which is called a normal map. Normal mapping is a computer graphics technique that simulates surface detail such as bumps and grooves using an image (bitmap). Real-time rendering in video games is limited by hardware capacity, generally by the number of polygons that can appear simultaneously on the screen. For this reason, maps are useful because they add detail to 3D models that have a relatively low number of polygons. The process of obtaining maps, also called baking, requires two steps: first, the creation of a simplified (decimated) model based on the original high-resolution one, and second, the unwrapping of its polygons onto a flat map on which the texture of the material can be painted. The mesh is decimated before the object is imported into the Substance Painter software as an FBX or OBJ file, the latter with its accompanying material file (MTL). Editing and decimation were carried out using ZBrush and dedicated digital geometry processing tools (MeshLab, Autodesk Meshmixer).
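To make the idea of a normal map concrete, the sketch below derives normals from a small height field by central differences and encodes them as RGB values. This is a simplified stand-in for illustration, not the high-to-low-polygon baking performed in Substance Painter; the function names and the `strength` parameter are hypothetical.

```python
import math


def normal_from_height(height, x, y, strength=1.0):
    """Estimate the unit surface normal at grid cell (x, y).

    `height` is a list of rows of floats. Slopes are central differences,
    with indices clamped at the borders of the grid.
    """
    rows, cols = len(height), len(height[0])

    def at(ix, iy):
        ix = min(max(ix, 0), cols - 1)
        iy = min(max(iy, 0), rows - 1)
        return height[iy][ix]

    dx = (at(x + 1, y) - at(x - 1, y)) * 0.5 * strength
    dy = (at(x, y + 1) - at(x, y - 1)) * 0.5 * strength
    nx, ny, nz = -dx, -dy, 1.0
    length = math.sqrt(nx * nx + ny * ny + nz * nz)
    return (nx / length, ny / length, nz / length)


def encode_rgb(normal):
    """Pack a unit normal with components in [-1, 1] into 8-bit RGB."""
    return tuple(int(round((c * 0.5 + 0.5) * 255)) for c in normal)
```

A flat area encodes to the familiar light blue of normal maps, while a slope tilts the normal away from the rising side, which is how a flat polygon can be shaded as if it carried relief.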

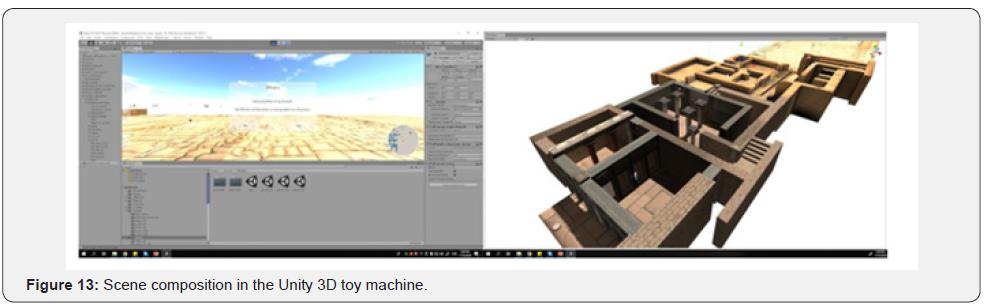

The texture applied to the 3D model is based on images taken from photogrammetry, where available, or from the photographic documentation. Using a parametric material designer (Substance Designer, www.allegorithmic.com), which follows a node-based workflow, we produce a parametric material that provides and controls all the information of the final texture in a flexible way, reducing the memory cost. Texturing is carried out in two stages. The first creates a palette of parametric materials designed in a PBR authoring tool such as Substance Designer. Then the selected material is applied to each digital model, and any part of it can be painted using digital 3D painting programs such as Substance Painter (Figures 8 & 9). The final step is to export the sections as FBX files from the modeling program to a game engine such as Unity or Unreal, where the digital environment is composed and developed. To create a detailed 3D model, the reconstructed mesh was built and then simplified into simple closed geometry, ready to be inserted into the 3D base. After being imported into the 3D modeling program (3ds Max, Blender 3D), each section is placed at its exact coordinates and requires further editing and composition to produce the final overall structured environment in the game engine (Unity 3D).

Creation of a three-dimensional virtual tour interface Desktop/VR mode

Virtual browsing can be done in two ways: in Virtual Reality, using Oculus Rift equipment, or in desktop mode, without the need for any special equipment. In both cases there is an interface between the user and the tour. This interface allows the user to interact with three-dimensional objects in the space, access browsing settings (e.g. the interactive map) and activate audiovisual content at areas of interest in the space. In VR Mode, the interaction with the environment and the graphical user interface (UI) is based on what the user is looking at, and an Xbox-type controller is required. By moving his head, the user steers a cursor that is activated when it is over an object with which he can interact (e.g. an exhibit, a point of interest, a button on the UI) and then, by pressing the appropriate button on the controller, performs the interaction.

In Desktop Mode, the user interacts with the mouse by clicking on the interactive objects.

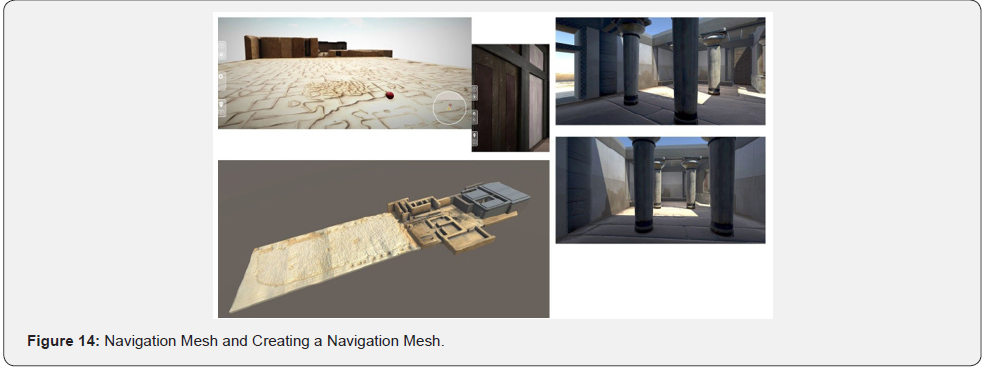

The NavMesh technology of the Unity 3D graphics engine is used to chart the route as well as to automatically navigate from point to point (Figure 10).

a) User route design

The interactive map included in the virtual tour allows the user to design their own route by selecting the points of interest that they want to visit. Once the points are selected, the system calculates the shortest path that starts from the user’s position and passes through all the points of interest, and then draws it on the map so that the user can follow it. The order in which the points of interest are selected does not matter, as the system sorts them so as to minimize the length of the route. Conversely, if the user chooses to follow the default path, they visit a set of points of interest in a predefined order, so that the tour tells a specific story with a beginning and an end.
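The route calculation can be sketched as a search over all visiting orders. The sketch is a simplification under stated assumptions: it measures straight-line distances between 2D points, whereas the system described here obtains path lengths from Unity's NavMesh, and exhaustive search is only practical for a handful of points. The function names are hypothetical.

```python
import math
from itertools import permutations


def route_length(points):
    """Total straight-line length of a polyline through `points`."""
    return sum(math.dist(a, b) for a, b in zip(points, points[1:]))


def shortest_route(start, points_of_interest):
    """Return the visiting order that minimizes the route length.

    The route begins at `start` and passes through every point of
    interest once; the order in which the points were selected by the
    user has no influence on the result.
    """
    best_order = min(
        permutations(points_of_interest),
        key=lambda order: route_length((start, *order)),
    )
    return list(best_order)
```

For instance, with the user at `(0, 0)` and the points `(10, 0)`, `(1, 0)` and `(5, 0)` selected in that order, the function returns them sorted along the line, nearest first.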

b) Teleportation to places in the area

The third function of the interactive map allows the user to “teleport” directly to points of interest without having to navigate and find them in the space.

c) Visual navigation aids that mark points of interest

The places where the user can find information in the space are indicated with markers (arrows, icons) and with bright surfaces. All signs are two-dimensional surfaces placed in the three-dimensional space and implemented as visual effects. There are up or down flow arrows, as well as indicator arrows for the presence of media (image, sound and text).

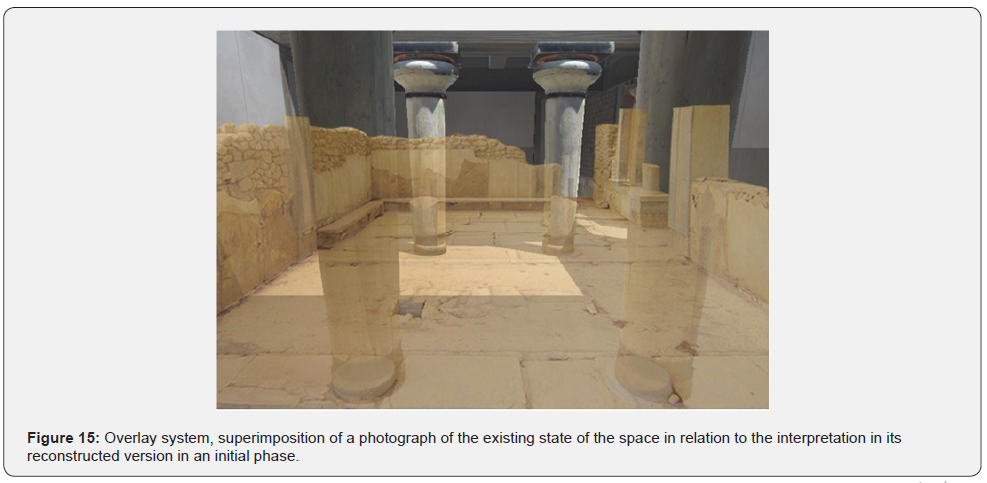

d) Integration of specially processed images in specific points, which offer the possibility of comparing digital reconstruction with the current situation of the archeological site (image overlay).

At the points of interest marked with an up or down flow arrow, the superposition of a two-dimensional image in the three-dimensional space is activated. In Figure 11, the photo of the existing condition of the archeological site, taken at the point where the interpretation of the site has been reconstructed, is overlaid on the reconstruction, allowing viewers to juxtapose the reconstructed interpretation with the existing condition.

e) Hotspots

At the points where information is given, a zone of influence is defined around each point, and the ability to interact is activated when the player-user passes through it. Text overlays and audio files for playback of recorded narration are supported.
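A zone of influence of this kind can be sketched as a radius test that fires once when the player crosses into the zone. The class and attribute names are hypothetical, and a circular zone in 2D is an assumption made for brevity; in a game engine this is typically handled by trigger colliders.

```python
import math


class Hotspot:
    """A point of interest with a circular zone of influence."""

    def __init__(self, name, position, radius):
        self.name = name
        self.position = position
        self.radius = radius
        self.player_inside = False

    def update(self, player_position):
        """Call once per frame; returns True only on the frame the
        player enters the zone, so content is offered once per visit."""
        inside = math.dist(self.position, player_position) <= self.radius
        entered = inside and not self.player_inside
        self.player_inside = inside
        return entered
```

Tracking whether the player was already inside avoids re-triggering the narration on every frame while the player stands within the zone.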

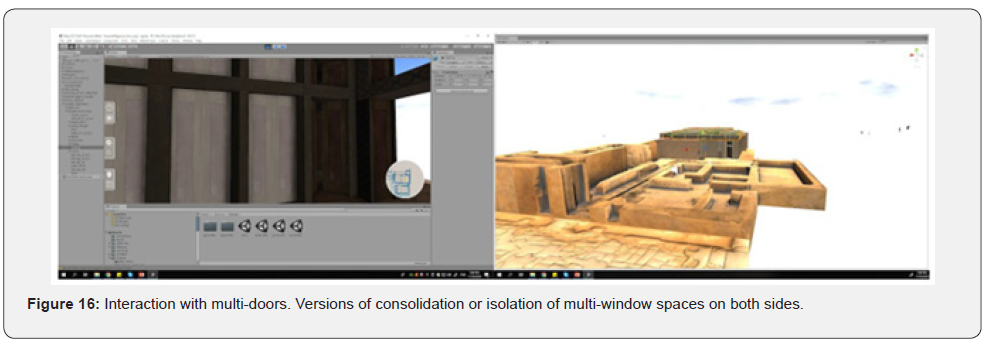

f) Interaction with objects

Interaction in the space is achieved through points of interest that allow the geometry of an object, or its texture, to be switched.

g) Narration and texts

After bibliographic research, we created thematic sections (indicatively: reconstruction, art and architecture, linguistic constructions), under which we wrote texts of 150 to 400 words. These texts are available at the interaction points of the space and are also recorded as narration (in Greek and English). The texts are translated into other languages (Arabic, Farsi) for accessibility to more language groups, and also for special workshops that promote multilingualism, where principles of diversity are applied and space is created for archeology to be discussed from multiple perspectives and participants’ experiences.

h) View explanatory animation

Based on the bibliographic research, a series of short informative texts for iGuide was created, both in text form and in audio or animation form (in Greek and English). This material is placed throughout the 3D space, helping users to gain knowledge of the various findings and elements of the reconstruction and the interpretations surrounding it.

i) Presentation with a choice of time in the course of the day

The user can set the time of day in the guide. Code developed in the Unity engine moves the light source that represents the sun along a trajectory simulating the sun’s movement, while changing the intensity of the light source (Figure 13).
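One simple way to drive such a light source is sketched below. It is an assumption-laden illustration, not the project's code: the sun is taken to sweep a half-circle at constant speed between a fixed sunrise and sunset, and the intensity is taken to follow the sine of its position on that arc. The function name and default hours are hypothetical.

```python
import math


def sun_state(hour, sunrise=6.0, sunset=18.0, max_intensity=1.0):
    """Return (arc_degrees, intensity) for a given hour of the day.

    arc_degrees runs from 0 at sunrise through 90 at midday to 180 at
    sunset and can be used to rotate a directional light. At night the
    arc is None and the intensity is 0.
    """
    if hour < sunrise or hour > sunset:
        return None, 0.0
    progress = (hour - sunrise) / (sunset - sunrise)
    arc_degrees = 180.0 * progress
    intensity = max_intensity * max(0.0, math.sin(math.pi * progress))
    return arc_degrees, intensity
```

Calling `sun_state(12.0)` places the sun overhead at full intensity, while early morning and late afternoon hours give a low, dimmer light.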

j) Language selection

Offering a choice of language in the guide is a way to appeal to a global audience.

iGuide System Components

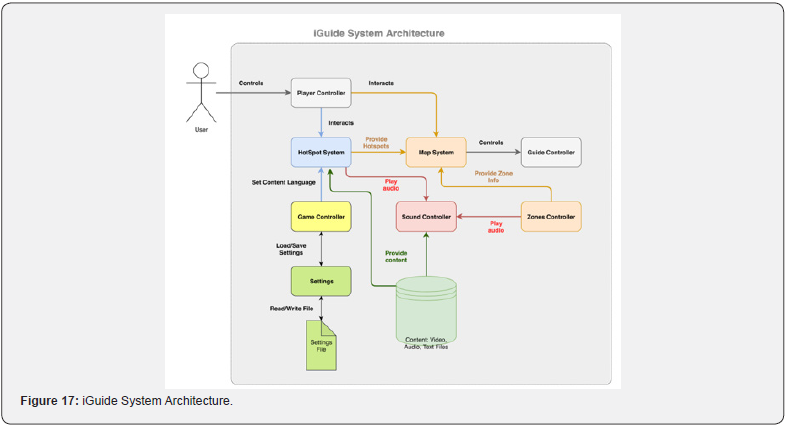

System architecture

The iGuide application consists of individual subsystems, each of which controls a different part of the virtual tour. All subsystems are implemented in the Unity3D game engine and are written in the C# programming language. The subsystems, as well as the communication between them, are presented in the architectural diagram of the system.

Settings

This subsystem implements the application settings and allows the user to change the language and adjust the graphics quality and volume. The settings are stored in a file and used by the Game Controller to configure the environment and the contents of the tour.
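A file-backed settings store of this kind might look like the following minimal sketch. The JSON format, the field names and the default values are all assumptions made for illustration; the source does not specify how the settings file is structured.

```python
import json
from dataclasses import asdict, dataclass
from pathlib import Path


@dataclass
class Settings:
    # Hypothetical fields mirroring the options named in the text.
    language: str = "en"
    graphics_quality: str = "medium"
    volume: float = 0.8


def save_settings(settings, path):
    """Write the settings to a JSON file."""
    Path(path).write_text(json.dumps(asdict(settings), indent=2), encoding="utf-8")


def load_settings(path):
    """Read the settings file, falling back to defaults if it is missing."""
    file = Path(path)
    if not file.exists():
        return Settings()
    return Settings(**json.loads(file.read_text(encoding="utf-8")))
```

A controller that starts first, such as the Game Controller, would call `load_settings` during initialization and apply the returned values to the environment.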

Game controller

This subsystem is the first to start when the virtual tour scene is loaded, and it initializes the environment where the browsing takes place.

The subsystem reads the settings made by the user (e.g. language, graphics and audio settings) and applies them to the tour environment. The settings can be changed at any time during the tour, not just at the beginning.

Player controller

This subsystem implements the user’s first-person navigation in the virtual space. It simulates a person’s movement and includes a perspective camera that plays the role of the user’s eyes. The subsystem receives input from various devices (keyboard, mouse, Xbox Controller, Oculus Rift headset) and moves the user’s “character” in the virtual space accordingly. The functions of the Player Controller include:

a) Moving the character (walk / run) with the WASD keys on the keyboard or the thumbsticks of the Xbox Controller.

b) Turning the character with the mouse or the gyroscope of the Oculus Rift headset.

c) Making the character jump with the Spacebar key on the keyboard or with a button on the Xbox Controller.

d) Interacting with points of interest and objects in the space by clicking on them or by pressing a button on the Xbox Controller.

Guide controller

This subsystem takes care of navigating the virtual tour guide. The guide moves only when there is an active navigation path, and its purpose is to guide and help the user navigate the virtual space. When a tour path is selected, the Guide Controller receives the calculated path from the Map System and then uses the Unity NavMesh system to follow this path. Each time the guide reaches a point of interest, it stops and waits for a command from the user before moving on to the next point.
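The stop-and-wait behaviour of the guide can be sketched as a small state machine. The class and method names are hypothetical, and the actual movement between stops (handled by the NavMesh system in the application) is left out.

```python
class GuideController:
    """Steps a guide through an ordered list of points of interest."""

    def __init__(self, stops):
        self.stops = list(stops)
        self.index = 0
        self.waiting = False

    @property
    def active(self):
        """True while there are stops left on the path."""
        return self.index < len(self.stops)

    def current_target(self):
        """The stop the guide is walking to, or None when it is
        waiting for the user or has finished the path."""
        if self.active and not self.waiting:
            return self.stops[self.index]
        return None

    def arrive(self):
        """Called when the guide reaches its current target."""
        if self.active:
            self.waiting = True

    def continue_tour(self):
        """Called on the user's command to move to the next stop."""
        if self.waiting:
            self.waiting = False
            self.index += 1
```

Keeping the waiting flag separate from the stop index means a repeated user command has no effect while the guide is already walking.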

Hotspot system

The Hotspot System implements all the interactive points of interest that exist within the virtual space and are encountered during tours. The points of interest offer the user audiovisual content (video, recorded narration, text) that informs him about a specific topic, and enable interaction with objects in the space. Points of interest are activated, and available, only when the user approaches them through the Player Controller.

The Hotspot System displays the content in the language selected by the user in the application settings.

Map system

This subsystem implements all the functions related to the interactive map of the tour and the creation of routes. The Map System communicates with the Player Controller, Guide Controller and Hotspot System so that it can display the positions of the user, the guide and the points of interest on the map. It uses Unity’s NavMesh system to calculate the paths between points of interest. The functions of the subsystem are:

a) Top view of the entire space where the tour takes place. This operation is performed using an orthographic projection camera that looks at the entire space from above. The camera’s image is rendered in a texture that appears in the user’s graphical interface when he chooses to see the interactive map.

b) The mini-map, which is a small map that shows only the immediate area around the user as he navigates the space. It is implemented in the same way as the large map, only the mini-map is visible continuously in the graphical user interface.

c) Default tour path. This is a default path that leads the user through specific points of interest in a specific order to tell a story. The system asks the Hotspot System for all the points of interest that belong to the default route, calculates the route that passes through them, draws it on the map and then teleports the Player Controller to the starting point of the route.

d) User route design. This feature allows the user to select the points of interest he wants to visit and thus design his own route. The Map System calculates the shortest route that passes through these points, taking as its starting point the position of the Player Controller at the time the route was designed.

e) Teleportation to points of interest. The Map System transfers the Player Controller directly to the selected point of interest, without navigation.

f) Free or automatic browsing. When the Map System calculates and draws the path on the map, it also passes the path to the Guide Controller subsystem, which navigates the tour guide. In free browsing, the user must navigate himself through the Player Controller and follow the guide. In automatic browsing, by contrast, the Player Controller follows the guide automatically without user intervention.

Zones Controller

The function of this subsystem is to detect when the user enters certain spaces and to automatically start, through the Sound Controller, an audio narration / description of the specific space. It also holds the name of the space, which appears on the mini-map so that the user knows which space they are entering.

Sound Controller

The Sound Controller undertakes to play and configure anything related to audio content. Its functions are:

a) Play ambient sounds in the virtual space so that it feels more “alive”.

b) Play audio effects (e.g. footsteps) for greater realism.

c) Play narrations at points of interest when the user selects them.

d) Play narrations for the spaces that the user enters.

e) Mix sound and fade in / out between ambient sounds and narrations.

f) Display subtitles if this has been selected in the application settings.
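The fade between ambient sound and narration in item e) can be illustrated with a linear crossfade. This is a sketch under assumptions: the source does not state the fade curve or duration, and the function name is hypothetical.

```python
def crossfade_gains(elapsed, duration):
    """Return (ambient_gain, narration_gain) during a linear crossfade.

    `elapsed` is the time since the narration started and `duration`
    the length of the fade, both in seconds. The two gains always sum
    to 1, and the result is clamped outside the fade window.
    """
    if duration <= 0:
        raise ValueError("duration must be positive")
    t = min(max(elapsed / duration, 0.0), 1.0)
    return 1.0 - t, t
```

Swapping the two returned values gives the reverse fade, back to the ambient sound, once the narration has finished.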

3D and detailed animated character modeling related to the selected space

A number of three-dimensional character models are developed to animate the space. The characters depict Bronze Age figures as they appear in the murals, and add a fantasy dimension. The characters will be developed at the model design level with software for creating and animating 3D models (Figure 14).

Animation of the space with characters

The characters animate the space by moving through it along circular routes in continuous repetition. This gives an overview of the possible routes and also conveys the scale of the space, which can be read against the height of the characters. Animation can also enable the creation of interactive games aimed at understanding and assimilating the elements of the real environment represented in the model, as well as the socio-cultural elements associated with it.

Geolocating digital assets on the 3D physical space with Waygoo

When digital CH assets are associated with physical CH artefacts residing in a physical space that is accessible to visitors, such as a museum, an archaeological site or a cultural space, it may be desirable to offer visitors an augmented experience by associating the physical artefacts with their digital replicas (“digital twins”), geocoding the replicas within the same space in which their physical counterparts reside. In other circumstances, one may want to curate a collection of digital assets and provide a museum or exhibition-like experience related to a narrative experienced within a physical space. In either case, these digital assets must be associated with a physical space by geocoding them appropriately. In the context of the SYNTHESIS platform, this is achieved through the Waygoo platform.

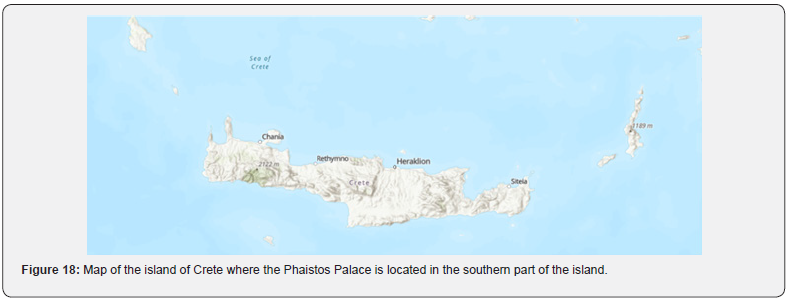

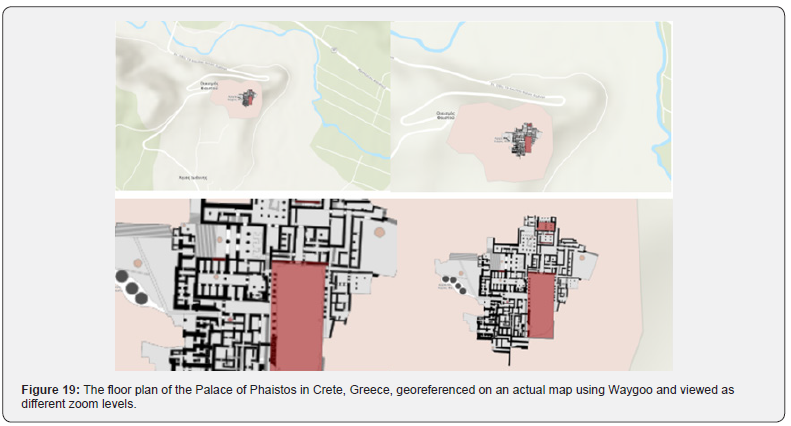

The Waygoo platform allows any 2D or 3D physical space to be geocoded on a digital map, in absolute or relative Mercator coordinates (Figures 15-17). Using the geocoded digital space, the Waygoo platform in turn allows each digital asset to be geocoded with the coordinates at which the curator wants to place it in the physical space. In this way, the digital assets (content) are embedded in the physical space and become part of it, and they can be experienced in two different ways: (a) by navigating in the virtual replica of the physical space; or (b) by being projected in the physical space and retrieved while touring the actual physical space. In either case, the Waygoo platform supports two different automatic navigation modes: one on demand, based on origin-destination demarcation, and another based on a curated (and possibly personalized) narrative involving a succession of preselected geocoded points in the space, following the story-telling of the curated narrative. A Waygoo geocoded and curated collection of digital assets can be experienced as an iOS or Android mobile application, downloaded from the App Store or the Play Store respectively. An example for the archaeological site of the Palace of Phaistos in Crete, Greece (Figure 18), which is used as a reference case study throughout this paper, is shown in Figure 19.
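The difference between absolute and relative Mercator coordinates can be sketched with the spherical Mercator projection used by common web maps. Whether Waygoo uses exactly this variant is an assumption; the function names are hypothetical and the coordinates in the usage note are arbitrary illustrative values, not surveyed positions.

```python
import math

EARTH_RADIUS_M = 6378137.0  # sphere radius used by Web Mercator


def to_mercator(lat_deg, lon_deg):
    """Project latitude/longitude in degrees to absolute Mercator
    coordinates (x, y) in metres on the projected plane."""
    x = EARTH_RADIUS_M * math.radians(lon_deg)
    y = EARTH_RADIUS_M * math.log(
        math.tan(math.pi / 4 + math.radians(lat_deg) / 2)
    )
    return x, y


def to_relative(lat_deg, lon_deg, origin_lat_deg, origin_lon_deg):
    """Express a position relative to a chosen origin, for example one
    corner of a floor plan, in the same projected units."""
    x, y = to_mercator(lat_deg, lon_deg)
    origin_x, origin_y = to_mercator(origin_lat_deg, origin_lon_deg)
    return x - origin_x, y - origin_y
```

For example, `to_relative(35.0, 25.001, 35.0, 25.0)` yields a positive x offset and a zero y offset, since the point lies due east of the origin. Projected Mercator distances are stretched with latitude, so relative offsets are in projected units rather than exact ground metres.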

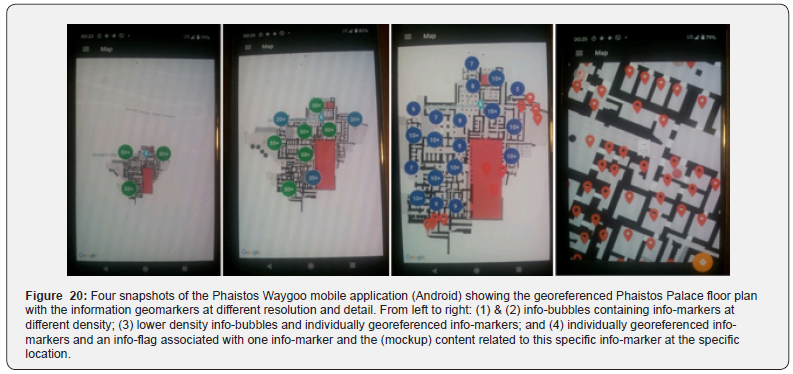

Content associated with the Palace of Phaistos, be it text, images, 3D objects or videos, is georeferenced using Waygoo, overlaid on the floor plan with information geomarkers, and displayed using the Waygoo mobile application on Android and iOS. Preliminary screenshots from the Phaistos Waygoo mobile application on Android are shown in Figure 20. The information geomarkers are shown at different resolutions. Content and space navigation functionalities are available to the user of the application.

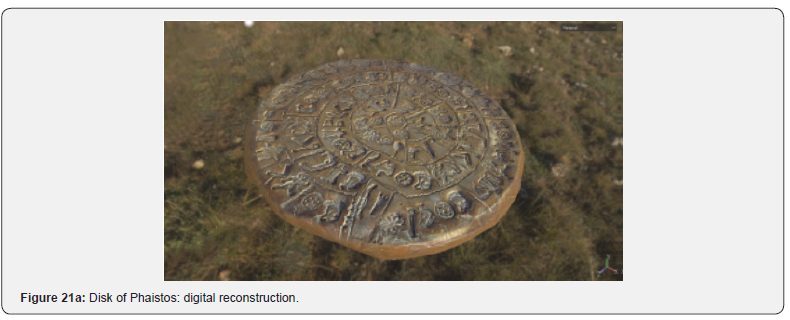

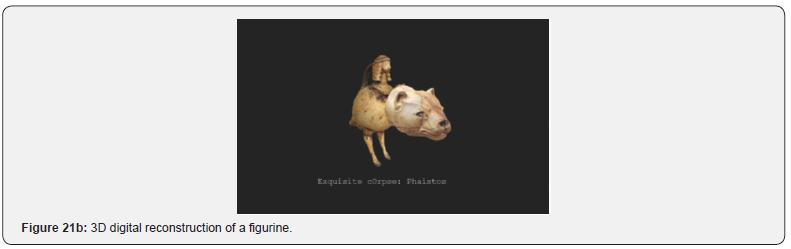

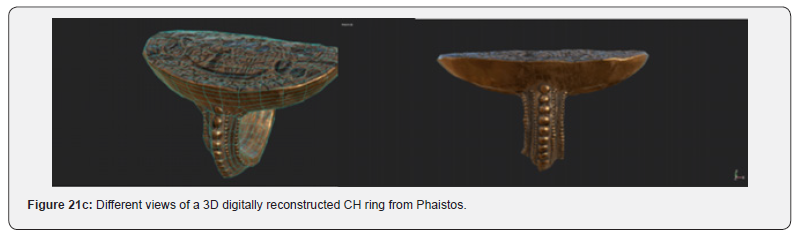

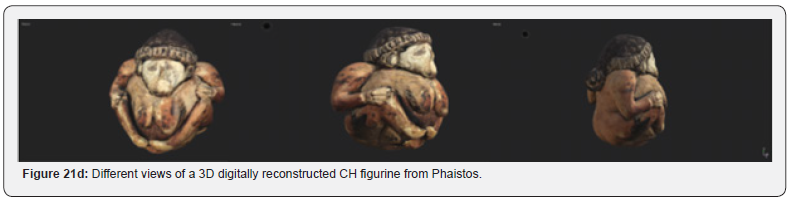

Examples of the content that is stored in the Waygoo database and can be viewed and experienced by the user of the Waygoo Phaistos mobile application, on and off the site of the Palace of Phaistos itself, are given in Figures 21a-21d. All CH artefacts and digital assets related to the Palace of Phaistos are georeferenced with respect to the specific spatial coordinates within the complex of the Palace.

Summary

In this paper we presented the SYNTHESIS Platform that is being developed at the Integrated Systems Laboratory (ISL) of NCSR Demokritos. The Platform is one of three platforms under development at ISL for the creation and digital reconstruction of CH assets, the storage and maintenance of the digital assets, the georeferencing of the digital assets in the virtual and physical space, the visualization of the geocoded digital assets with the Waygoo Platform [7], the curation of the digital CH assets and the creation of narratives with the AFIGISSI platform [8], and the interactive dynamic visualization of digital content with the COSMOS platform [9]. SYNTHESIS, AFIGISSI and COSMOS constitute a trilogy of technological platforms for advanced digitization and curation of digital CH assets.

Acknowledgement

This research project was partially sponsored by the Operational Programme “Competitiveness, Entrepreneurship and Innovation” (NSRF 2014-2020) and co-financed by Greece and the European Union (European Regional Development Fund) under action SYNTELESIS “Innovative Technologies and Applications based on the Internet of Things (IoT) and the Cloud Computing” with grant number MIS 5002521/ 2016-2020 [10].

References

- Palyvou, Clairy (2009) Bronze age architectural traditions in the Eastern Mediterranean: Diffusion and Diversity, Munich 2008, Conference Proceedings edition, p. 116.

- Halbherr, Pernier L (1900-1904) & Doro Levi (1950-1971) Excavations / Restorations: Italian School of Archeology.

- Tsakanika-Theohari Eleftheria (2006) The structural role of wood in the masonry of the palace-type buildings of Minoan Crete. PhD diss, NTUA, Athens.

- Tsakanika-Theohari Eleftheria (2008) The constructional analysis of timber load bearing systems as a tool for interpreting Aegean Bronze Age architecture, Munich Paper.

- Stelios CA Thomopoulos, Adam Doulgerakis, Maria Bessa & Konstantinos Dimitros, Giorgos Farazis, et al. (2016) DICE: Digital Immersive Cultural Environment. Springer, Cham, Euro-Mediterranean Conference, pp. 758-777.

- George Farazis, Christina Thomopoulos, Christos Bourantas, Sofia Mitsigkola, & Stelios CA Thomopoulos (2019) Digital approaches for public outreach in cultural heritage: The case study of iGuide Knossos and Ariadne's Journey. Digital Applications in Archaeology and Cultural Heritage, Elsevier Publ, 15(e00126).

- Stelios CA Thomopoulos (2019) NARRATION: An integrated system of managing and editing digital content and producing personalized and collaborative narratives, Proceedings of the 3rd Panhellenic Congress of Digitisation of Cultural Heritage, EUROMED 2019 - Culture, Education, Research, Innovation, Digital Technologies, Tourism, University of West Attica Conference Center - Campus 2, Athens, Greece, pp. 25-27.

- Stelios CA Thomopoulos, Tsimpiridis P, Theodorou EI, Xristopoulou X (2019) Cultural Osmosis - Mythology & Art Proceedings of the 3rd Panhellenic Congress of Digitization of Cultural Heritage, EUROMED 2019 - Culture, Education, Research, Innovation, Digital Technologies, Tourism, University of West Attica Conference Center - Campus 2, Athens, Greece, pp. 25-27.

- SYNTELESIS Innovative Technologies and Applications based on the Internet of Things (IoT) and the Cloud Computing with grant number MIS 5002521/ 2016-2020.