Survey of Kinect v2 Applied to Radiotherapy Patient Positioning

Emily DiGiovanna1, Michael Lamba2, and Peter Sandwall3*

1Department of Physics, University of New Orleans, USA

2College of Medicine, University of Cincinnati, USA

3OhioHealth-Mansfield, USA

Submission: November 16, 2017; Published: November 30, 2017

*Corresponding author: Peter Sandwall, OhioHealth-Mansfield, Radiation Oncology, 335 Glessner Ave, Mansfield, OH 44903, USA, Email: pasandwall@gmail.com

How to cite this article: Emily DiGiovanna, Michael Lamba, Peter Sandwall. Survey of Kinect v2 Applied to Radiotherapy Patient Positioning. Canc Therapy & Oncol Int J. 2017; 8(1): 555730. DOI: 10.19080/CTOIJ.2017.08.555730

Abstract

Radiotherapy delivers high doses of radiation to localized areas, necessitating precise motion management and positioning verification. Microsoft Kinect v2 (Kv2) is an affordable Red Green Blue Depth (RGB-D) camera that uses advanced time of flight technology, with potential application to patient setup verification for radiotherapy treatments. This readily available and relatively inexpensive technology could be developed into an affordable solution for patient setup verification in developing countries. This report outlines necessary information and provides useful sources for beginning a radiotherapy Kv2 project. Extensive resources are available for both versions of the Kinect, making it difficult to isolate the pertinent information. The goal is to reduce the learning curve associated with beginning a Kv2 radiotherapy project.

A literature review was conducted and the relevant information distilled in review format. Easy-to-follow charts, explanations, and links guide readers to relevant information for a comprehensive understanding of advanced concepts: an overview of applications in radiotherapy; time of flight; stereo vision; mathematical concepts such as matrices and quaternions; and the limitations of Align RT and Kv2. Tables list resources intended to shorten the time needed to become familiar with Kv2 concepts, relevant mathematics, and available programs and technology relating to patient setup verification and the Kv2. Numerous resources are provided to supply readers with an organized approach to starting a Kv2 radiotherapy project.

Introduction

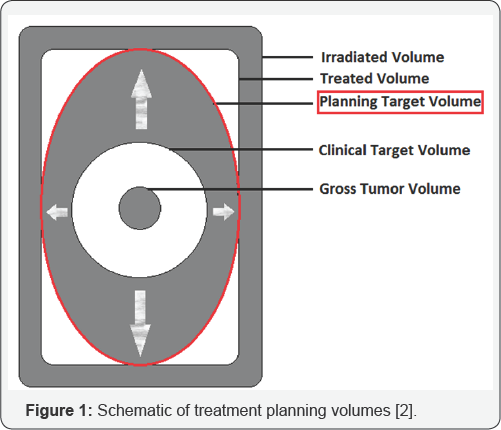

The Kinect version 2 (Kv2) (Microsoft, Redmond, WA) is an economical time of flight (TOF) technology with potential for application to patient positioning verification in radiotherapy. In radiotherapy the patient is initially positioned during the simulation computed tomography (CT) scan, which is then used to create a treatment plan. The treatment plan is designed to deliver a tumoricidal dose to a planning target volume (PTV), which encompasses the gross disease with an added margin to account for setup uncertainties. Once a treatment plan is approved, patients return for multiple treatment fractions over a period of days or weeks. Replicating precise patient positioning between fractions is critical to ensure accurate and effective delivery of the approved treatment plan. A misalignment could result in unwanted irradiation of healthy tissue and under-dosing of portions of the target volume. Improvements in daily setup accuracy may allow for reduction of the PTV margin, thus increasing healthy tissue sparing [1] (Figure 1).

Technological improvements allow for millimeter precision in patient positioning. Ideally, patient positioning verification systems should assess 6 degrees of freedom (DOF), given the increasing number of 6 DOF robotic tables entering the market [2-6]. Positioning verification systems need to be efficient, replicable, and easy to implement for therapists, who are responsible for daily patient setup and immobilization. Technical factors that contribute to setup uncertainties include inter- and intra-fraction motion, imaging misalignment, and mechanical uncertainties such as those of the isocenter and alignment lasers [1]. Optical tracking is a non-invasive patient positioning and verification technique that reduces radiation exposure from on-board CT imaging [7]. Align RT (Vision RT Ltd., London, UK) is a leading technology in optical tracking; however, cost is a significant barrier to implementation in low and middle income countries (LMIC), leaving opportunity for development of alternative optical tracking technologies. The potential for an affordable patient verification application utilizing the Kv2 in LMIC provides the motivation for this work.

Kv2 hardware includes infrared emitters, depth sensors, audio microphone arrays, and HD color cameras. Multiple avenues have been explored in evaluating the Kinect for radiotherapy. Applications include methods to increase patient participation in the treatment process [4], treatment setup [8-10], intrafraction motion management [7,11-13], respiratory motion and deep inspiration breath hold [10,14-18], collision detection [7,19], and techniques for camera quality assurance and calibration [7,20]. Starting a project with the Kv2 presents one with an overwhelming abundance of information. The purpose of this paper is to provide the reader with a background on patient positioning in radiotherapy and to bridge the gap to beginning a Kv2 project with no prior experience. This report outlines how to start an image guided surface tracking project utilizing the Kv2. It begins with a review of radiotherapy as it pertains to a Kv2 project, follows with a discussion of the mathematical concepts and a survey of existing programs, and concludes with a comparison of the technology used in Align RT and Kv2.

Getting started with the Microsoft Kinect Software Development Kit (SDK)

The SDK can be intimidating to new or inexperienced programmers; however, it is a good place to start learning about the Kv2 and its features. Microsoft provides the download details, system requirements, and installation instructions at the following URL: https://www.microsoft.com/en-us/download/details.aspx?id=44561. To access the built-in basic applications, locate the Microsoft SDK root directory and open the bin sub-directory within it. With the device connected, opening any of the applications will display a live feed from the sensor(s). The SDK provides libraries and source code for these applications. SDK applications include: Audio Basics, Body Basics, Color Basics, Controls Basics, Coordinate Mapping Basics, Depth Basics, Discrete Gesture Basics, Face Basics, HD Face Basics, Infrared Basics, and Kinect Fusion Explorer. Applications can be installed from within the SDK Browser by opening “Samples.” Source code can be accessed through the \samples\managed sub-directory, which contains both compiled and uncompiled code. The reference library is found within the \assemblies sub-directory; it is essential to add the reference library to a new application when writing its code.

Mathematical Concepts

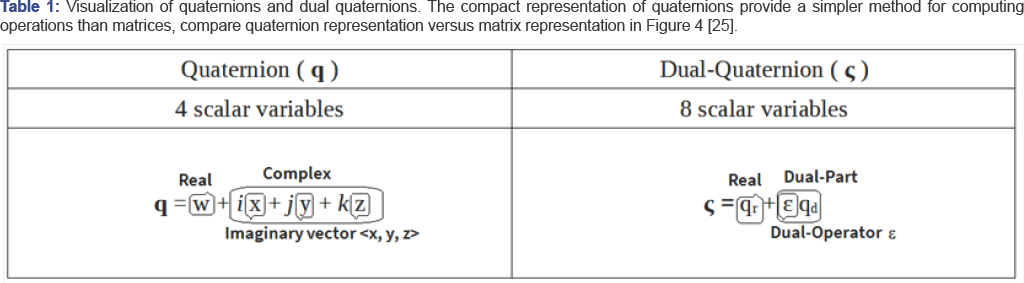

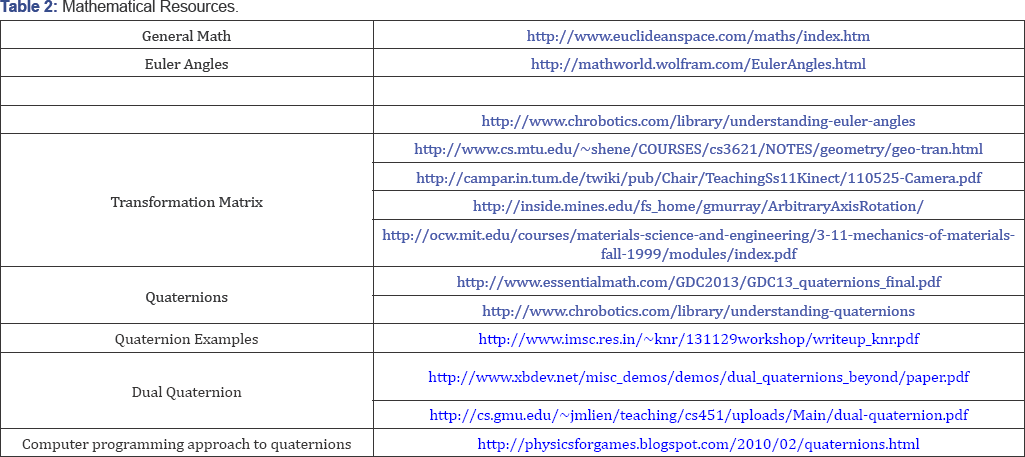

Common mathematical approaches used in Kinect applications include quaternions, dual quaternions, axis/angle, Euler angles, and matrices. Tables 1 & 2 contain available resources for further understanding of these concepts.

Euler Angles

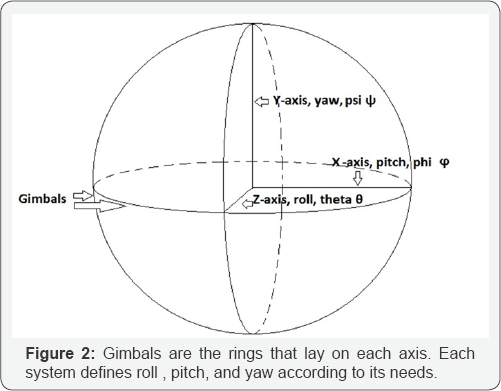

Euler angles describe an orientation as three successive rotations about defined axes [21]. Euler angles are relatively easy to conceptualize; however, they can suffer from gimbal lock [22]. Gimbal lock is the loss of one degree of freedom that occurs when one gimbal rotates far enough to align the remaining two gimbals, leaving only 2 degrees of freedom available for rotation [22] (Figure 2). To see how Euler angles rotate about each axis, try this with a pen using the figure as a guide.
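
Gimbal lock can be reproduced numerically. The following is a minimal sketch, assuming a yaw-pitch-roll (Z-Y-X) rotation order; the convention, function names, and angle values are illustrative choices and are not taken from the cited sources. At a pitch of 90 degrees, two different yaw/roll pairs with the same difference produce the same rotation, showing that one degree of freedom has been lost.

```python
import math

def rot_x(a):
    """3x3 rotation matrix about the x axis (angle in radians)."""
    c, s = math.cos(a), math.sin(a)
    return [[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]]

def rot_y(a):
    """3x3 rotation matrix about the y axis (angle in radians)."""
    c, s = math.cos(a), math.sin(a)
    return [[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]]

def rot_z(a):
    """3x3 rotation matrix about the z axis (angle in radians)."""
    c, s = math.cos(a), math.sin(a)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

def matmul(a, b):
    """Product of two 3x3 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def euler_zyx(yaw_deg, pitch_deg, roll_deg):
    """Assumed convention: R = Rz(yaw) * Ry(pitch) * Rx(roll)."""
    yaw, pitch, roll = (math.radians(v) for v in (yaw_deg, pitch_deg, roll_deg))
    return matmul(rot_z(yaw), matmul(rot_y(pitch), rot_x(roll)))

def max_abs_diff(a, b):
    """Largest element-wise difference between two 3x3 matrices."""
    return max(abs(a[i][j] - b[i][j]) for i in range(3) for j in range(3))

# At pitch = 90 degrees only the difference (roll - yaw) matters,
# so these two distinct angle triples describe the same orientation.
locked = max_abs_diff(euler_zyx(30, 90, 10), euler_zyx(50, 90, 30))
# Away from 90 degrees the same two triples give different orientations.
free = max_abs_diff(euler_zyx(30, 45, 10), euler_zyx(50, 45, 30))
print(locked, free)
```

The first printed value is zero to within rounding error, while the second is clearly non-zero.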

Axis/Angle

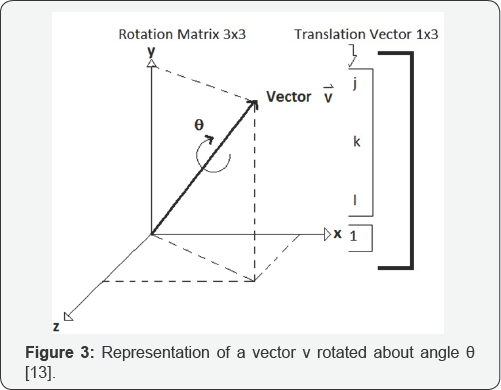

Axis/angle represents a rotation by an angle about an axis, where the axis is given as a vector [23] (Figure 3). The representation is easy to visualize and intuitive to understand, but rotations in this form are difficult to combine and therefore need to be converted into a representation that is easier to concatenate, such as matrices or quaternions [23].
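
As an illustration of the axis/angle representation, the sketch below rotates a vector with Rodrigues' rotation formula, a standard result that is not discussed in the cited sources. The function name and the example values are hypothetical.

```python
import math

def rotate_axis_angle(v, axis, angle_rad):
    """Rotate vector v about `axis` by `angle_rad` using Rodrigues' formula:
    v' = v cos(t) + (k x v) sin(t) + k (k . v) (1 - cos(t)), with k the unit axis.
    """
    norm = math.sqrt(sum(c * c for c in axis))
    k = [c / norm for c in axis]  # normalise so any non-zero axis is accepted
    cos_t, sin_t = math.cos(angle_rad), math.sin(angle_rad)
    dot = sum(ki * vi for ki, vi in zip(k, v))
    cross = [k[1] * v[2] - k[2] * v[1],
             k[2] * v[0] - k[0] * v[2],
             k[0] * v[1] - k[1] * v[0]]
    return [v[i] * cos_t + cross[i] * sin_t + k[i] * dot * (1.0 - cos_t)
            for i in range(3)]

# A quarter turn about the z axis carries the x axis onto the y axis.
print(rotate_axis_angle([1.0, 0.0, 0.0], [0.0, 0.0, 2.0], math.pi / 2))
```

Applying two such rotations in sequence is straightforward, but expressing the combined result as a single axis and angle is not, which is why the rotation is usually converted to a matrix or quaternion first.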

Matrices

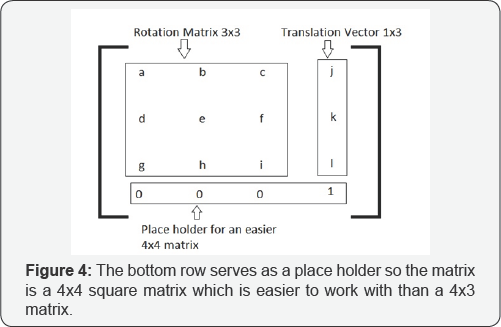

Matrices are arrays of numbers or information set up in rectangular format. Transformations are typically represented by a 4x4 matrix consisting of a 3x3 rotation matrix, a 3x1 translation vector, and a 1x4 bottom row {0,0,0,1}; the rotation part changes a vector's direction while its length remains unchanged [23]. Matrices are commonly used to represent the same rotational and translational information as the other methods; see Figure 4 for a visualization of these concepts.
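
The 4x4 layout described above can be sketched in a few lines. This is a minimal example, assuming a rotation about the z axis and a translation in millimeters; the helper names and numeric values are hypothetical and chosen only to show how the blocks fit together.

```python
import math

def rotation_about_z(angle_deg):
    """3x3 rotation matrix for a rotation about the z axis."""
    a = math.radians(angle_deg)
    c, s = math.cos(a), math.sin(a)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

def make_transform(rotation, translation):
    """Assemble the 4x4 matrix: [R | t] on top, {0,0,0,1} as the bottom row."""
    rows = [list(rotation[i]) + [translation[i]] for i in range(3)]
    rows.append([0.0, 0.0, 0.0, 1.0])
    return rows

def compose(a, b):
    """4x4 matrix product a * b (b is applied first, then a)."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

def apply_transform(transform, point):
    """Apply a 4x4 transform to a 3D point using homogeneous coordinates."""
    p = [point[0], point[1], point[2], 1.0]
    return [sum(transform[i][j] * p[j] for j in range(4)) for i in range(3)]

# Rotate 90 degrees about z, then shift by (10, 0, -5) mm.
T = make_transform(rotation_about_z(90.0), [10.0, 0.0, -5.0])
print(apply_transform(T, [1.0, 0.0, 0.0]))
```

Concatenating transforms is a single matrix product, which is the property that axis/angle lacks.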

Quaternions and Dual Quaternions

Quaternions are composed of 4 values (1 real part and 3 imaginary parts) and are used to rotate vectors [24]. Quaternions represent only rotation, not translation. Dual quaternions combine rotation and translation in a compact representation [25], known as a single state variable, composed of a real part and a dual part that contains a dual operator [23]. See Table 1 for visualization of quaternions and dual quaternions. Quaternions and dual quaternions are preferred for programming applications. Quaternions avoid the gimbal lock singularity associated with Euler angles (a singularity being a point at which a function takes an infinite value) [23], and mathematical operations such as multiplication are simpler to perform on them than on matrices (Table 2).
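
A brief sketch of quaternion rotation follows, assuming the (w, x, y, z) ordering with the real part first and the Hamilton product; other libraries may order the components differently. The function names are hypothetical. A vector v is rotated by forming q v q*, where q* is the conjugate of the unit quaternion q.

```python
import math

def quat_from_axis_angle(axis, angle_rad):
    """Unit quaternion (w, x, y, z) for a rotation of angle_rad about axis."""
    norm = math.sqrt(sum(c * c for c in axis))
    s = math.sin(angle_rad / 2.0) / norm
    return (math.cos(angle_rad / 2.0), axis[0] * s, axis[1] * s, axis[2] * s)

def quat_multiply(a, b):
    """Hamilton product a * b; as a rotation, b is applied first, then a."""
    aw, ax, ay, az = a
    bw, bx, by, bz = b
    return (aw * bw - ax * bx - ay * by - az * bz,
            aw * bx + ax * bw + ay * bz - az * by,
            aw * by - ax * bz + ay * bw + az * bx,
            aw * bz + ax * by - ay * bx + az * bw)

def quat_conjugate(q):
    """Conjugate of q; for a unit quaternion this is the inverse rotation."""
    w, x, y, z = q
    return (w, -x, -y, -z)

def quat_rotate(q, v):
    """Rotate 3D vector v by unit quaternion q via q * (0, v) * conj(q)."""
    p = (0.0, v[0], v[1], v[2])
    _, x, y, z = quat_multiply(quat_multiply(q, p), quat_conjugate(q))
    return (x, y, z)

# Two 45 degree turns about z combine into one 90 degree turn.
q45 = quat_from_axis_angle((0.0, 0.0, 1.0), math.pi / 4)
q90 = quat_multiply(q45, q45)
print(quat_rotate(q90, (1.0, 0.0, 0.0)))
```

Combining rotations costs one quaternion product of 16 multiplications, compared with 27 for a 3x3 matrix product.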

Other Mathematics

Other mathematical approaches include Fourier transforms [26,27], Horn's Algorithm [28,29], the Simultaneous Localization and Mapping (SLAM) Algorithm [30,31], and the Iterative Closest Point (ICP) Algorithm [32]. The ICP algorithm provides a method for three-dimensional alignment of point sets [32]. SLAM addresses the problem of locating the camera trajectory relative to a mapped environment and is used for dense real-time surface reconstruction [30]. Horn's Algorithm utilizes unit quaternions to address the least-squares problem of absolute orientation [28]. The least squares method is used to determine and minimize the difference between a baseline and a reference data set. Absolute orientation refers to recovering the transformation between two coordinate systems as it applies to photogrammetry (making measurements from photographs) [28]. In a subsequent publication, Horn et al. [29] addressed the same problem, utilizing orthonormal matrices (3x3 rotation matrices) to represent rotation.
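
To make the least-squares idea concrete, the sketch below solves a planar (2D) simplification of the absolute orientation problem for point pairs whose correspondence is already known. It is not Horn's algorithm, which handles the full 3D case with unit quaternions [28], and it omits the correspondence search that ICP performs [32]. The function names and test points are hypothetical.

```python
import math

def fit_rigid_2d(source, target):
    """Least-squares rotation angle and shift mapping source points onto target.

    Minimises sum |R(theta) p_i + t - q_i|^2 over theta and t for paired
    points p_i (source) and q_i (target). Returns (theta_rad, (tx, ty)).
    """
    n = len(source)
    sx = sum(p[0] for p in source) / n
    sy = sum(p[1] for p in source) / n
    tx = sum(q[0] for q in target) / n
    ty = sum(q[1] for q in target) / n
    num = den = 0.0
    for (px, py), (qx, qy) in zip(source, target):
        ax, ay = px - sx, py - sy      # centred source point
        bx, by = qx - tx, qy - ty      # centred target point
        num += ax * by - ay * bx       # accumulates the sin(theta) term
        den += ax * bx + ay * by       # accumulates the cos(theta) term
    theta = math.atan2(num, den)
    c, s = math.cos(theta), math.sin(theta)
    shift = (tx - (c * sx - s * sy), ty - (s * sx + c * sy))
    return theta, shift

def rms_residual(source, target, theta, shift):
    """Root-mean-square distance between transformed source and target."""
    c, s = math.cos(theta), math.sin(theta)
    total = 0.0
    for (px, py), (qx, qy) in zip(source, target):
        dx = c * px - s * py + shift[0] - qx
        dy = s * px + c * py + shift[1] - qy
        total += dx * dx + dy * dy
    return math.sqrt(total / len(source))

# Reference outline (mm) and the same outline rotated 5 degrees and shifted.
reference = [(0.0, 0.0), (100.0, 0.0), (100.0, 50.0), (0.0, 50.0)]
t = math.radians(5.0)
observed = [(math.cos(t) * x - math.sin(t) * y + 2.0,
             math.sin(t) * x + math.cos(t) * y - 1.0) for x, y in reference]
theta, shift = fit_rigid_2d(reference, observed)
print(math.degrees(theta), shift, rms_residual(reference, observed, theta, shift))
```

In a positioning context the recovered angle and shift correspond to the correction that would bring the observed surface back onto the baseline.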

Fourier transforms are used for image and audio analysis by converting data into the frequency domain [26]. Digital signal processing operates on digitized signals and allows these transforms to be computed quickly [27]. Fourier transforms are often computed with Matlab, a programming tool used for machine learning, signal processing, image processing, computer vision, robotics, and more [33]. Matlab software has an adapter for versions 1 and 2 of the Kinect, which can be used to process raw data from the sensors [34].
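
The conversion to the frequency domain can be illustrated with a direct discrete Fourier transform (DFT). The sketch below is deliberately naive (it is not a fast Fourier transform) and is written in Python rather than Matlab; the breathing-like signal, its 0.25 Hz frequency, and the 4 Hz sampling rate are hypothetical values chosen for illustration.

```python
import cmath
import math

def dft(samples):
    """Direct O(n^2) discrete Fourier transform of a real or complex sequence."""
    n = len(samples)
    return [sum(samples[t] * cmath.exp(-2j * cmath.pi * k * t / n)
                for t in range(n))
            for k in range(n)]

def dominant_frequency_hz(samples, sample_rate_hz):
    """Frequency of the strongest non-DC component of a real signal."""
    spectrum = dft(samples)
    n = len(samples)
    # Skip bin 0 (the mean) and stop at the Nyquist bin.
    k = max(range(1, n // 2 + 1), key=lambda i: abs(spectrum[i]))
    return k * sample_rate_hz / n

# Hypothetical chest-height trace: 10 mm offset plus a 5 mm, 0.25 Hz oscillation.
rate_hz = 4.0
trace = [10.0 + 5.0 * math.sin(2.0 * math.pi * 0.25 * i / rate_hz)
         for i in range(32)]
print(dominant_frequency_hz(trace, rate_hz))
```

The frequency resolution equals the sampling rate divided by the number of samples, so a longer recording is needed to distinguish closely spaced frequencies.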

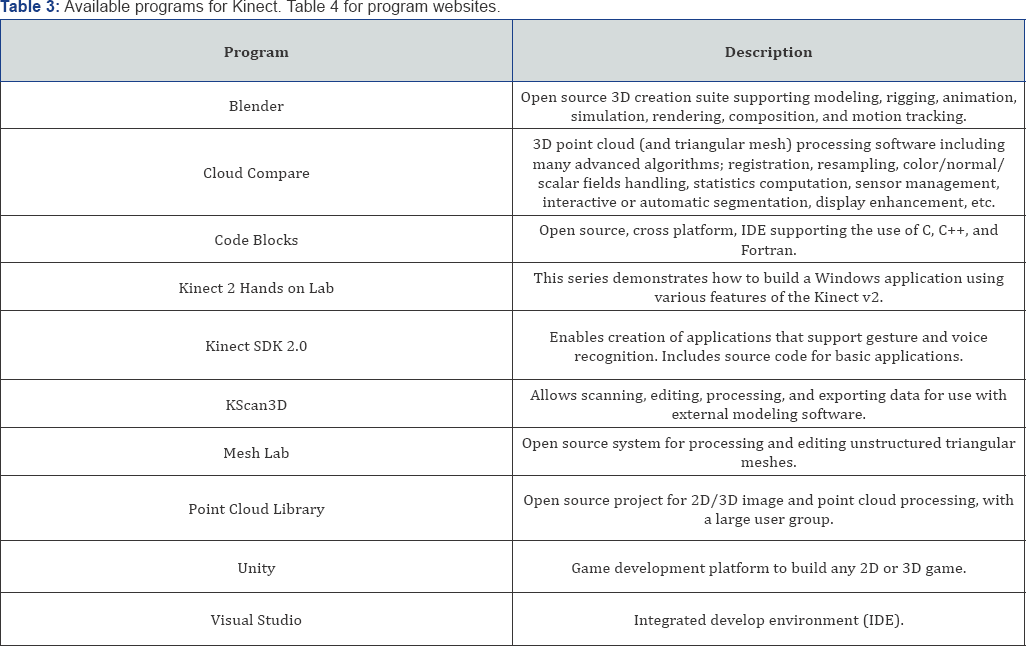

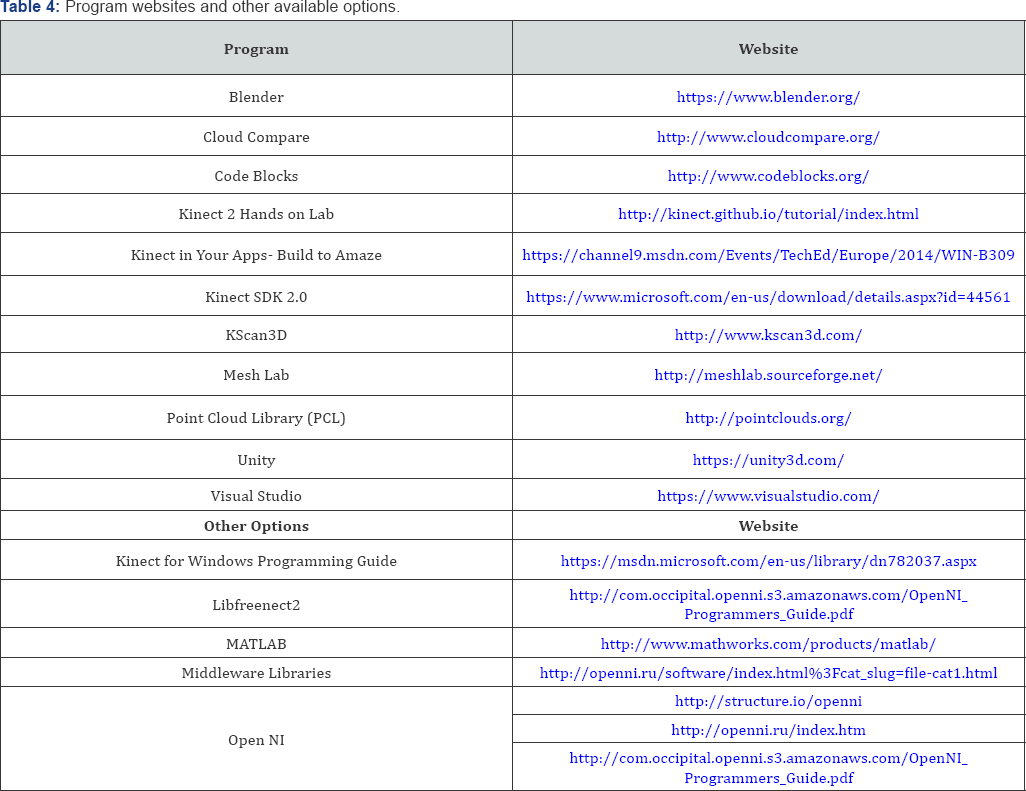

Survey of Existing Programs Compatible with the Kv2

Programs have been developed to foster the creation of applications for the Microsoft Kinect using the C# and C++ programming languages. Many applications are intended for either the Kv1 or the Kv2 and do not function with both. The Kv2 uses continuous wave time of flight (TOF) for depth measurements, whereas the Kv1 used structured light. Tables 3 & 4 contain a sampling of available programs that work with the Kv2. It is important to note that the literature does not always specify which version of the Kinect is being referenced. Ways to differentiate include observing photos of the Kinect device, checking the date of publication, and noting that the Kv1 is typically stated without a version, e.g. Kinect Sensor, Microsoft Kinect.

Comparison of Align RT versus Kv2

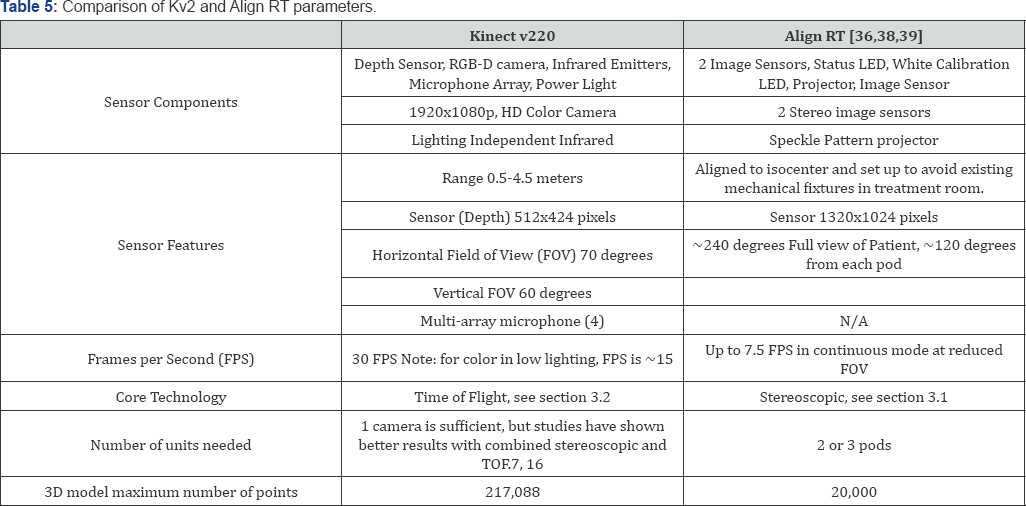

Stereoscopic

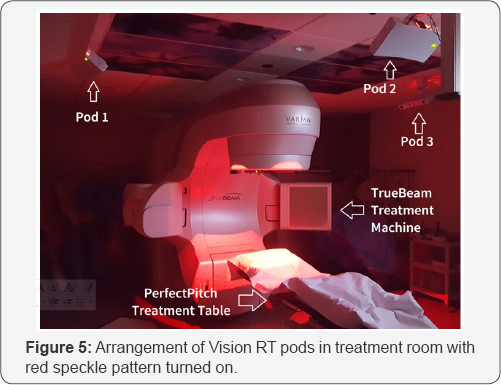

Align RT technology uses stereoscopic cameras to provide information from multiple sensors separated by a distance, similar to human eyes [35]. Two or three Align RT pods (mounted units containing the imaging hardware) are positioned around the treatment couch and out of the collision path of the accelerator (Figure 5) [36]. Align RT pods work by projecting a pseudo-random speckled pattern on the patient, providing approximately 20,000 points, referred to as “objects” or “virtual tattoos” [37], for the sensors to detect, which triggers the correspondence process [38]. The correspondence problem arises from matching points between multiple sensor viewpoints. The purpose of these “virtual tattoos” is to create texture points that provide the necessary information for the program to match and align corresponding points viewed from both cameras via triangulation [35]. This concatenation provides the 3-dimensional integrated surface model used to assist in position verification [39].
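
For an idealized, rectified two-camera pair, triangulation of a matched point reduces to the textbook relation depth = focal length x baseline / disparity. The sketch below illustrates that relation only; it does not describe the Align RT implementation, and the focal length, baseline, and disparity values are hypothetical.

```python
def depth_from_disparity_mm(focal_length_px, baseline_mm, disparity_px):
    """Depth of a matched point for an ideal rectified stereo pair.

    focal_length_px: focal length expressed in pixels
    baseline_mm:     distance between the two camera centres
    disparity_px:    horizontal shift of the point between the two images
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    return focal_length_px * baseline_mm / disparity_px

# Hypothetical values: 1000 px focal length, 100 mm baseline.
for disparity in (100.0, 50.0, 25.0):
    print(disparity, depth_from_disparity_mm(1000.0, 100.0, disparity))
```

Because depth varies inversely with disparity, a fixed one-pixel matching error produces a larger depth error for distant points than for near ones, which is one reason reliable correspondence matters.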

Time of Flight

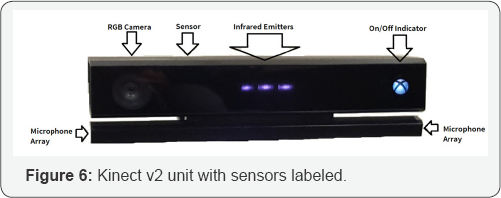

Kv2 uses continuous wave (CW) modulation time of flight (TOF) technology to collect information about a scene; Figure 6 shows the Kv2 unit. The two predominant time of flight methods are pulsed modulation and CW modulation. Pulsed modulation is not discussed in this paper because the Kv2 uses CW modulation; more information can be found in a review by Laukkanen [40]. CW modulation gathers depth values from the time it takes emitted infrared light waves (850 nm wavelength) to reflect off a surface and return to a depth sensor, which calculates the distance based on the phase shift of the reflected light waves [41,42]. The Kv2 emits square wave infrared light, illuminating the scene with photons that are read by pixels in the depth sensor [42]. Kv2's time of flight technology is discussed in more detail by Zennaro [42], Lachat [43], and Lau [44] (Table 5).
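
The relationship between phase shift and distance in CW modulation can be sketched with the standard single-frequency relation d = c Δφ / (4π f), where f is the modulation frequency and the factor of 4π (rather than 2π) accounts for the round trip. The 80 MHz value below is an illustrative assumption, not a specification taken from this paper, and the function names are hypothetical.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0

def distance_from_phase_m(phase_shift_rad, modulation_hz):
    """Distance implied by a measured phase shift at one modulation frequency."""
    return SPEED_OF_LIGHT_M_S * phase_shift_rad / (4.0 * math.pi * modulation_hz)

def unambiguous_range_m(modulation_hz):
    """Largest distance measurable before the phase wraps past 2*pi."""
    return SPEED_OF_LIGHT_M_S / (2.0 * modulation_hz)

modulation_hz = 80e6  # assumed value for illustration only
print(unambiguous_range_m(modulation_hz))
print(distance_from_phase_m(math.pi, modulation_hz))
```

A higher modulation frequency improves depth resolution but shortens the unambiguous range, which is why CW systems commonly combine measurements at more than one frequency.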

Calibration and Quality Assurance

Radiotherapy depends on quality assurance (QA) and calibration of the treatment units and associated technology. Quality control ensures that equipment is checked daily, monthly, and annually, verifying that equipment performance meets defined standards [45]. Align RT utilizes a calibration plate to perform monthly calibration, which accounts for distance, and daily QA [36]. There are no universally accepted methods for Kinect calibration; however, published works have described several methods for effectively calibrating the Kinect cameras. Two separate calibrations are needed since range imaging cameras utilize both geometric and depth information [20]. A geometric (scaling) calibration typically uses a planar checkerboard, while the depth calibration can be performed by repeatedly positioning the Kv2 facing a blank wall at known distances. To align Kv2s in the treatment room, Santhanam et al. [7] used a custom-made camera alignment jig with colored spheres aligned to isocenter, allowing the Kv2s to determine the room coordinate system.
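
One simple way to use the wall measurements is to fit a correction that maps measured depth to known depth. The sketch below assumes a linear model (gain and offset) fitted by ordinary least squares; this model is an assumption made for illustration, published calibrations may use more elaborate corrections, and the measurement values are invented.

```python
def fit_linear_correction(measured_mm, true_mm):
    """Least-squares gain and offset such that true ~= gain * measured + offset."""
    n = len(measured_mm)
    mean_m = sum(measured_mm) / n
    mean_t = sum(true_mm) / n
    sxx = sum((m - mean_m) ** 2 for m in measured_mm)
    sxy = sum((m - mean_m) * (t - mean_t) for m, t in zip(measured_mm, true_mm))
    gain = sxy / sxx
    offset = mean_t - gain * mean_m
    return gain, offset

def correct_depth_mm(measured, gain, offset):
    """Apply the fitted correction to a single depth reading."""
    return gain * measured + offset

# Invented readings: sensor placed at four known distances from a blank wall.
known = [800.0, 1200.0, 1600.0, 2000.0]
readings = [797.1, 1195.9, 1595.2, 1993.8]
gain, offset = fit_linear_correction(readings, known)
print(gain, offset, correct_depth_mm(1500.0, gain, offset))
```

Residuals that remain after such a fit indicate how well a linear model describes the sensor over the distances tested.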

Limitations

Kv2 and Align RT each have their own software and mechanical limitations. Align RT uses stereo vision techniques for imaging procedures. As discussed above under Calibration and Quality Assurance, calibration is a necessary step for stereo vision to convert depth estimates to true depth values [46]. Disadvantages of stereoscopic cameras include multiple computationally expensive steps, dependence on scene illumination and surface texturing, and stereo correspondence [38,47]. Limitations of the Kv2 include temperature drift [20,40,41,48], systematic distance error, depth inhomogeneity (flying pixel effect) [41,48], multi-path effects [40,41,48], noise [13,20,40,48], and object reflectivity/dynamic scenery/amplitude errors [20,40,41,48].

Conclusion

This report reviewed resources for understanding the Kv2 and Align RT, including relevant mathematical concepts, existing programs for the Kv2, and published applications in radiotherapy. We hope the preceding information will be helpful for individuals embarking on a radiotherapy project with the Kv2.

Acknowledgement

This paper was written at the conclusion of a 10-week undergraduate internship sponsored by Precision Physics, Inc. in honor of the late Howard R. Elson, PhD who had a profound impact on the lives of countless radiation physicists, oncologists, therapists, and nuclear medicine technologists. Work was performed at TriHealth Cancer Institute (Cincinnati, Ohio) with the support of U.S. Oncology staff members: Sarah McKee, Christopher Gerrein, Jared Weatherford, Kelli Ross, and Nicholas Lavini.

References

- F Khan (2007) Treatment Planning in Radiation Oncology, (2nd edn), Lippincott Williams & Wilkins, Philadelphia, USA, p. 16-18.

- “Prescribing, recording and reporting photon beam therapy,” International Commission on Radiation Units and Measurements, Report 50, ICRU (1993), Bethesda, MD, USA.

- Varian Medical Systems Inc. (2016) “Perfect Pitch 6 degrees of freedom couch: Advanced robotics for accurate patient setup.” CA, USA.

- Elekta (2016) “HexaPOD™ evo RT System: Robotic patient positioning system” Stockholm, Sweden.

- Accuray Inc. (2016) “Robocouch: Patient Positioning System.”

- CIVCO Medical Solutions (2016) "Protura™ Robotic Patient Positioning System.”

- Santhanam AP, Min Y, Kupelian P, Low D (2016) "Multi-Kinect v2 Camera Based Monitoring System for Radiotherapy Patient Safety.” Stud Health Technol Inform 220: 352-358.

- S Bauer, J Wasza, S Hasse, N Marosi, J Hornegger (2011) “Multi-modal surface registration for markerless initial patient setup in radiation therapy using Microsoft’s Kinect sensor.” Computer Vision Workshops (ICCV Workshops), 2011 IEEE International Conference on, IEEE, (2011), Spain.

- T Mullaney, B Yttergren, E Stolterman (2014) "Positional Acts: Using a Kinect™ Sensor to Reconfigure Patient Roles within Radiotherapy Treatment,” Proceedings of the 8th International Conference on Tangible, Embedded and Embodied Interaction, ACM.

- F Tahavori, E Adams, M Dabbs, L Aldridge, N Liversidge E, et al. (2015) "Combining marker-less patient setup and respiratory motion monitoring using low cost 3D camera technology.” SPIE Medical Imaging, International Society for Optics and Photonics, USA.

- M Aoki, M Ono, Y Kamikawa, K Kozono, H Arimura, et al. (2013) "Development of Real-Time Patient Monitoring System Using Microsoft Kinect,” World Congress on Medical Physics and Biomedical Engineering, 39: 1456-1459.

- PJ Noonan, J Howard, WA Hallett, RN Gunn (2015) “Repurposing the Microsoft Kinect for Windows v2 for external head motion tracking for brain PET.” Phys Med Biol 60(22): 8753-8766.

- JT Eagle (2014) Kinect Surface Tracking. Master of Science Thesis.

- Sebastian Bauer, Jakob Wasza, J Hornegger (2012) Photometric estimation of 3D surface motion fields for respiration management, Bildverarbeitung für die Medizin, pp. 105-110.

- J Xia, R Alfredo Siochi (2012) "A real-time respiratory motion monitoring system using KINECT: proof of concept.” Med phys 39(5): 2682-2685.

- M Heß, F Büther, F Gigengack, M Dawood, K Schäfers (2015) A dual- Kinect approach to determine torso surface motion for respiratory motion correction in PET, Med phys 42(5): 2276-2286.

- M Alnowami, B Alnwaimi, F Tahavori, M Copland, K Wells (2012) A quantitative assessment of using the Kinect for Xbox360 for respiratory surface motion tracking, SPIE Medical Imaging. International Society for Optics and Photonics, 8316, CA, USA.

- F Tahavori, M Alnowami, K Wells (2014) Marker-less respiratory motion modeling using the Microsoft Kinect for Windows, SPIE Medical Imaging, International Society for Optics and Photonics, 9036, CA, USA.

- L Padilla, E Pearson, C Pelizzari (2015) Collision prediction software for radiotherapy treatments. Med Phys 42(11): 6448-6456.

- E Lachat, H Macher, T Landes, P Grussenmeyer (2015) Assessment and Calibration of a RGB-D Camera (Kinect v2 Sensor) Towards a Potential use for Close-Range 3D Modeling, Remote Sensing 7(10): 13070-13097.

- E Weisstein (2016) Euler Angles. Math World-A Wolfram Web Resource.

- CH Robotics (2016) Understanding Euler Angles.

- Ben Kenwright (2012) A Beginners Guide to Dual-Quaternions: What they are, How they work, and How to use them for 3D character Hierarchies. WSCG, p. 1-10.

- J Van Verth (2016) Understanding Quaternions. Game Developers Conference.

- B Kenwright (2012) Dual-Quaternions: From Classical Mechanics to Computer Graphics and Beyond.

- R Fisher, S Perkins, A Walker, E Wolfart (2016) Fourier Transform.

- Analog Devices (2016) A Beginner's Guide to Digital Signal Processing (DSP).

- Berthold KP Horn (1987) Closed-form solution of absolute orientation using unit quaternions. JOSA A 4(4): 629-642.

- B Horn, H Hilden, S Negahdaripour (1988) Closed-form solution of absolute orientation using orthonormal matrices. JOSA A 5(7): 1127-1135.

- R Salas-Moreno, R Newcombe, H Strasdat, P Kelly, A Davison (2013) SLAM++: Simultaneous Localisation and Mapping at the Level of Objects, Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 1352-1359.

- O Wasenmuller, M Meyer, D Stricker (2016) CoRBS: Comprehensive RGB-D benchmark for SLAM using Kinect v2. Winter Conference on Applications of Computer Vision (WACV).

- N Gelfand, L Ikemoto, S Rusinkiewicz, M Levoy (2003) Geometrically stable sampling for the ICP algorithm. 3-D Digital Imaging and Modeling, Proceedings. Fourth International Conference on. IEEE, USA.

- Math Works (2016) The Language of Technical Computing. Matlab, p. 6.

- Y Zhu, T Shu (2016) Kinect v2 Toolbox Manual.

- L Li (2014) Time-of-Flight Camera- An Introduction. Technical White Paper, India.

- Varian Medical Systems (2015) Optical Surface Monitoring System. OM101, Training Document.

- Vision RT (2016) Core Technology.

- T Waldron (2009) "External Surrogate Measurement and Internal Target Motion: Photogrammetry as a Tool in IGRT,” ACMP Annual Meeting 10059, USA.

- C Bert, K Metheany, K Doppke, G Chen (2005) A phantom evaluation of a stereo-vision surface imaging system for radiotherapy patient setup. Medical Physics 32(9): 2753-2762.

- M Laukkanen (2015) Performance Evaluation of Time-of-Flight Depth Cameras. Master of Science Thesis.

- H Sarbolandi, D Lefloch, A Kolb (2015) Kinect Range Sensing: Structured-Light versus Time-of-Flight Kinect. Computer Vision and Image Understanding 139: 58.

- S Zennaro, M Munaro, S Milani, P Zanuttigh, A Bernardi, et al. (2015) Performance Evaluation of the 1st and 2nd Generation Kinect for Multimedia Applications, 2015 IEEE International Conference on Multimedia and Expo (ICME), USA.

- E Lachat, H Macher, M Mittet, T Landes, P Grussenmeyer (2015) First Experiences with Kinect V2 Sensor for Close Range 3D Modeling, The International Archives of the Photogrammetry, Remote Sensing, and Spatial Information Sciences, XL-5 (W4), p. 93-100.

- D Lau (2016) The Science Behind Kinects or Kinect 1.0 versus 2.0, Gamasutra: The Art & Business of Making Games.

- T Pawlicki, P Dunscombe, A Mundt, P Scalliet (2011) Quality and Safety in Radiotherapy, Taylor & Francis, Boca Raton, FL, p. 4.

- C Schaller (2011) Time-of-Flight- A New Modality for Radiotherapy, Diss. University of Erlangen-Nuremberg, Germany

- V Castaneda, N Navab (2011) Time-of-Flight and Kinect Imaging, Kinect Programming for Computer Vision, p. 53.

- L Valgma (2016) 3D reconstruction using Kinect v2 camera, Diss. Tartu Ulikool, Estonia, Europe.